Computational design is often presented in purely technical terms, without an understanding of the logic and theory behind the process. For example, lectures and presentations frequently consist of the presenter demonstrating how to do XYZ in a particular piece of software. However, this results in only a superficial understanding of computational design and further undermines its adoption, as it is portrayed as a tool rather than as a philosophy of design. This article seeks to address this issue through a brief explanation of common terminology used in computational design. The terms have been loosely grouped into six categories: geometry, mathematical algorithms, natural systems, computation, process and fabrication.

Geometry

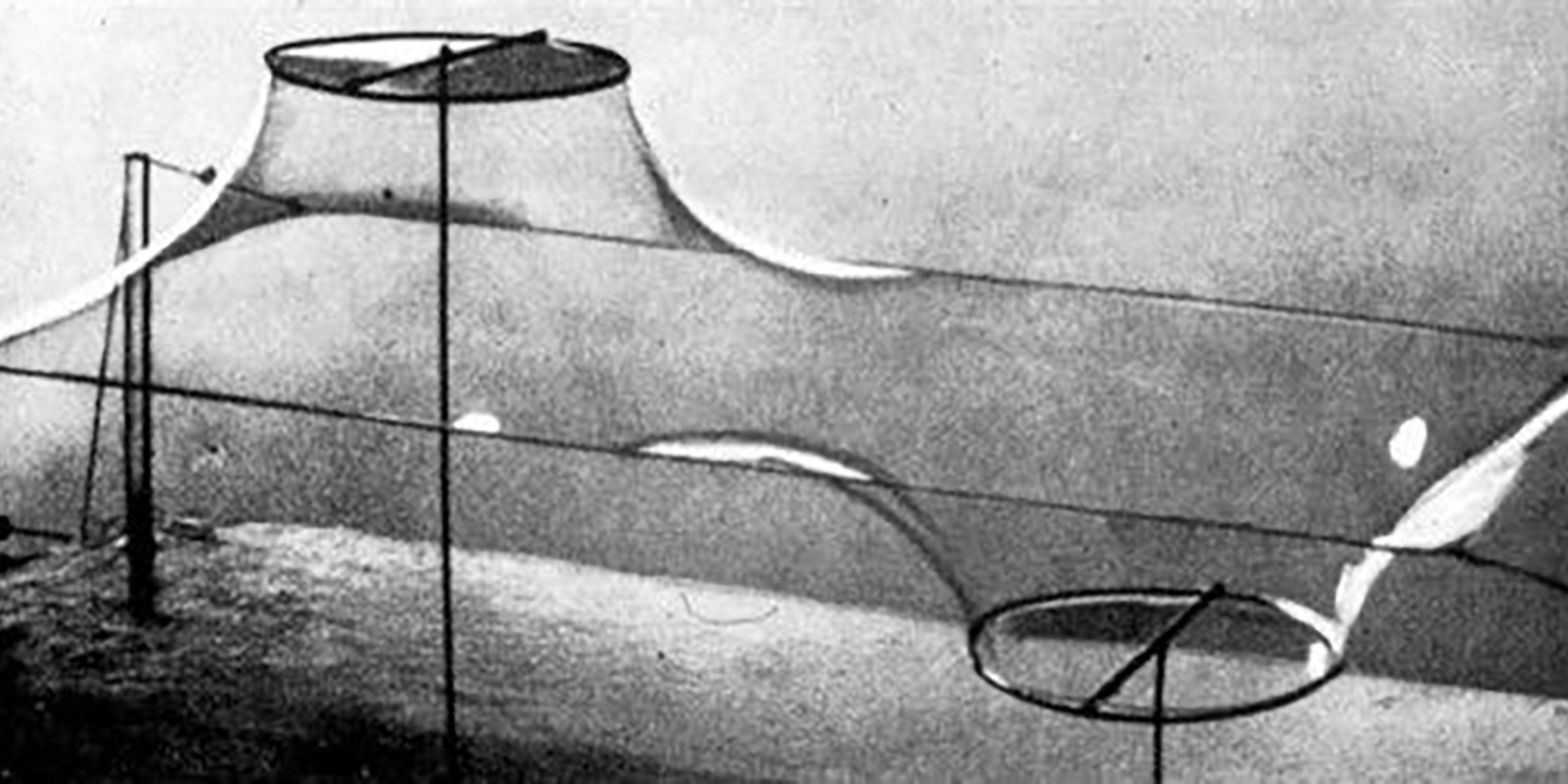

Minimal surface

A minimal surface is a surface that locally minimizes its area. This is equivalent to having a mean curvature of zero. The term ‘minimal surface’ is used because these surfaces originally arose as surfaces that minimised total surface area subject to some constraint. Physical models of area-minimising minimal surfaces, such as those by Frei Otto, can be made by dipping a wire frame into a soap solution, forming a soap film.

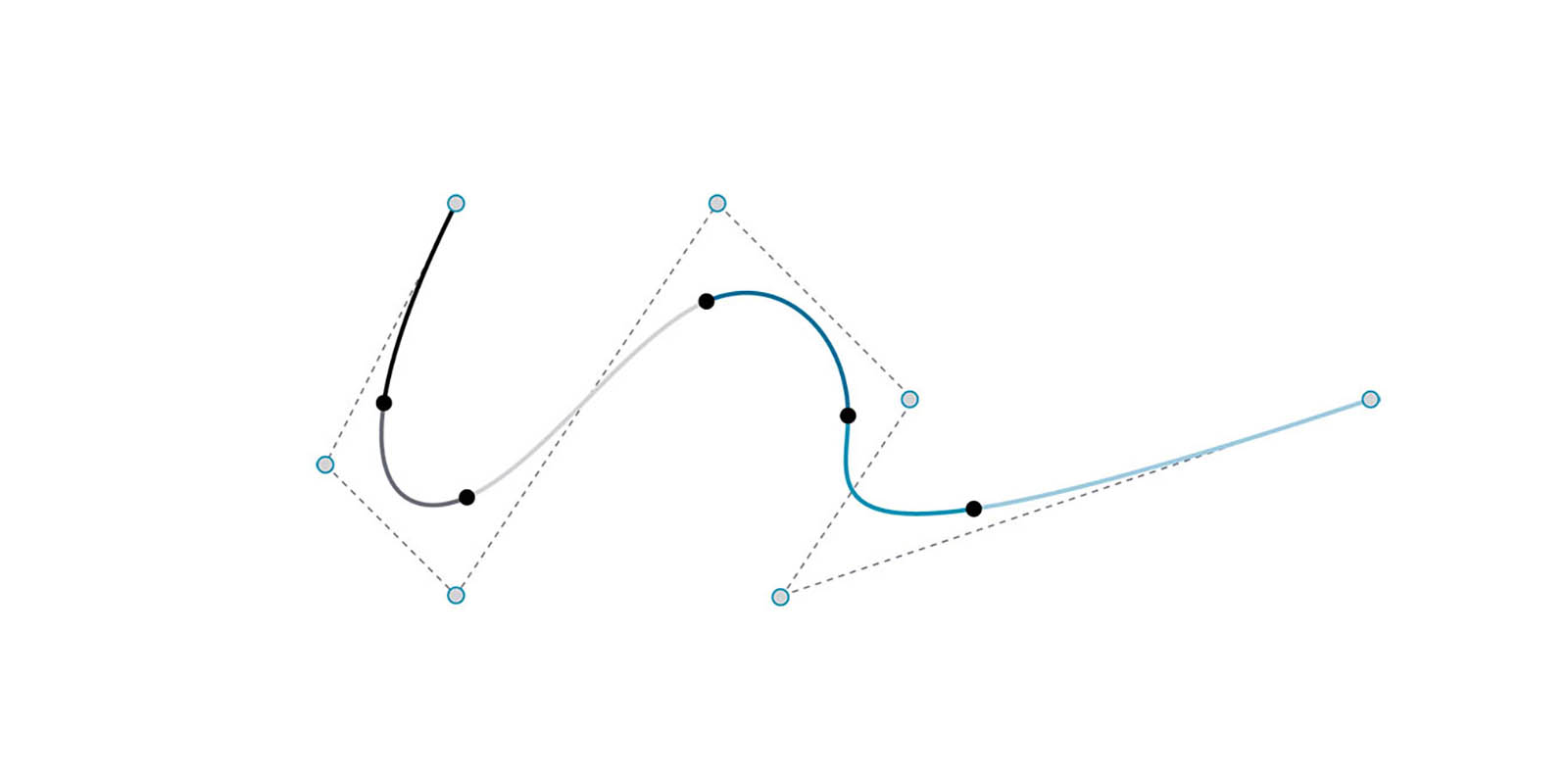

NURBS curves

Non-Uniform Rational B-Splines are mathematical representations that can accurately model any shape from a simple two-dimensional line, circle, arc, or rectangle to the most complex three-dimensional free-form organic curve.

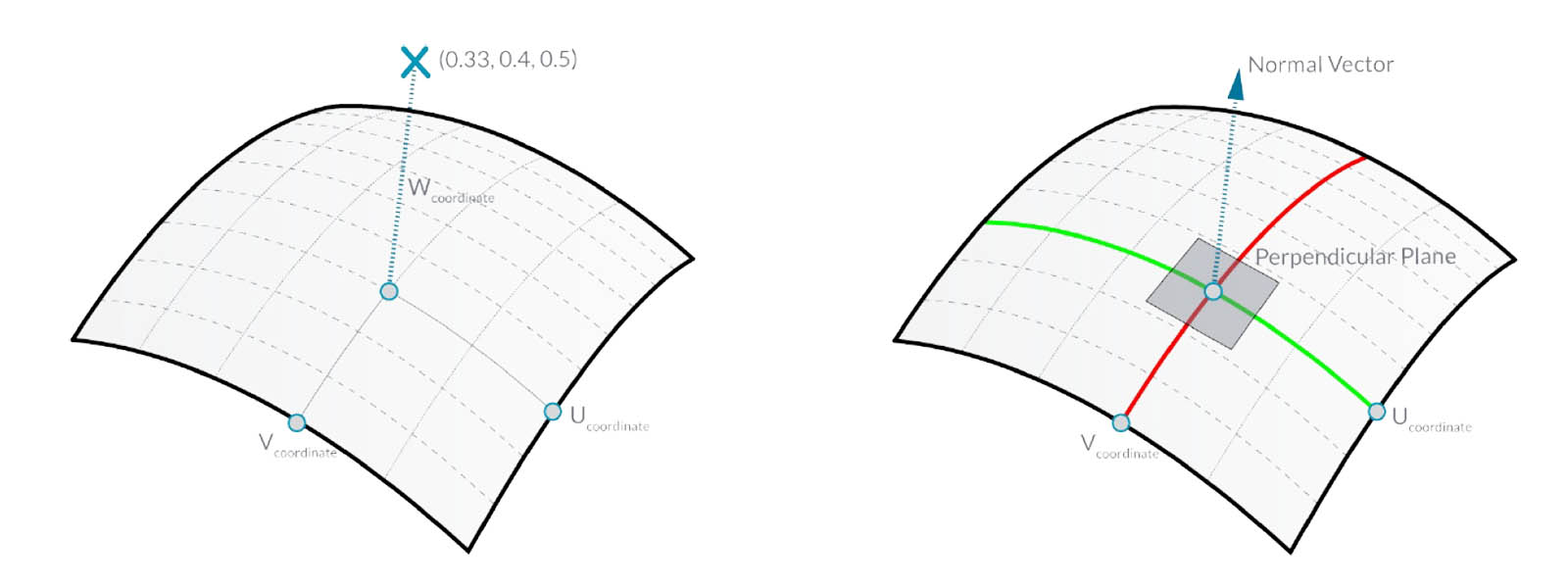

NURBS surfaces

You can think of NURBS surfaces as a grid of NURBS curves that go in two directions. The shape of a NURBS surface is defined by a number of control points and the degree of that surface in the u and v directions. NURBS surfaces are efficient for storing and representing free-form surfaces with a high degree of accuracy.

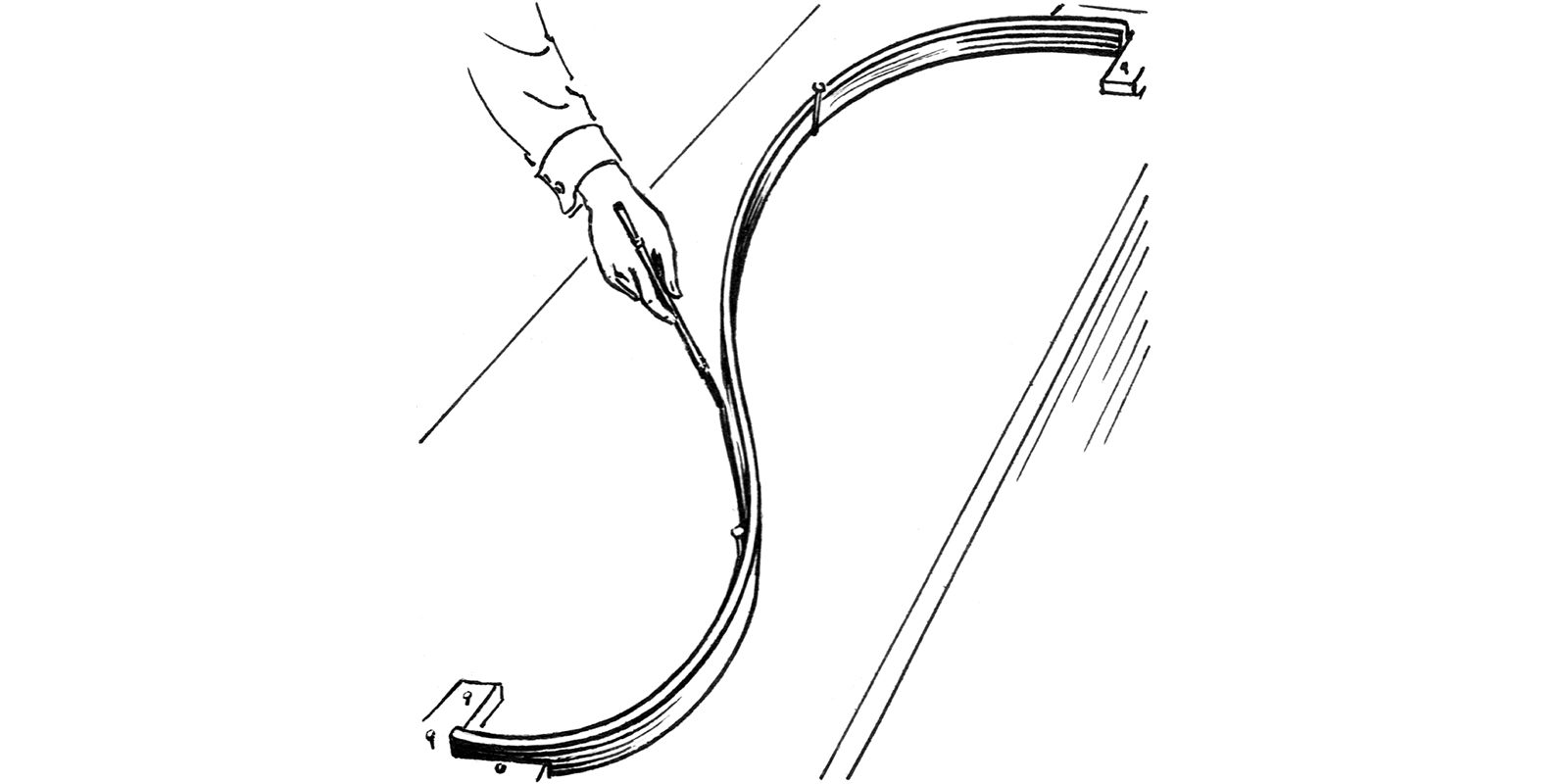

Spline

The term derives from the technical lexicon of shipbuilding, where it describes slats of wood that were bent and nailed to the timber frame of the hull. A spline is thus the smoothest line joining a number of fixed points.

Topology

Topology is the mathematical study of the properties that are preserved through deformations, twistings, and stretchings of objects. Tearing, however, is not allowed.

Mathematical algorithms

Cellular Automata

A cellular automaton consists of a regular grid of cells, each in one of a finite number of states, such as on and off. The grid can be in any finite number of dimensions. For each cell, a set of cells called its neighbourhood is defined relative to the specified cell. An initial state (time t = 0) is selected by assigning a state for each cell. A new generation is created (advancing t by 1), according to some fixed rule that determines the new state of each cell in terms of the current state of the cell and the states of the cells in its neighbourhood.
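The update rule described above can be sketched for the simplest case: a one-dimensional grid of on/off cells whose neighbourhood is the cell itself and its two immediate neighbours. The function names, the wrap-around edges and the default rule number (Wolfram's rule 90) are illustrative assumptions, not something the definition prescribes.

```python
def step(cells, rule=90):
    """Advance a 1D, two-state cellular automaton by one generation.

    The neighbourhood of each cell is (left, itself, right) and the grid
    wraps around at the edges. `rule` is a Wolfram rule number (0-255);
    90 is only an example choice.
    """
    n = len(cells)
    new_cells = []
    for i in range(n):
        left, centre, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (centre << 1) | right  # neighbourhood as a 3-bit number
        new_cells.append((rule >> index) & 1)        # look up the new state in the rule
    return new_cells


def run(cells, generations, rule=90):
    """Return the list of generations, starting with the initial state (t = 0)."""
    history = [list(cells)]
    for _ in range(generations):
        history.append(step(history[-1], rule))
    return history
```

Starting from a single 'on' cell, `run([0, 0, 0, 1, 0, 0, 0], 2)` returns three generations; under rule 90 each new state is simply the exclusive-or of the two neighbouring cells.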

Fractals

A fractal is a never-ending pattern. Fractals are infinitely complex patterns that are self-similar across different scales. They are created by repeating a simple process over and over in an ongoing feedback loop.
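As a small illustration of 'repeating a simple process over and over', the sketch below builds the Cantor set, one of the simplest fractals: every interval is replaced by its two outer thirds, and the output is fed back into the same rule. The choice of the Cantor set and the function names are assumptions made for the example.

```python
from fractions import Fraction


def cantor_step(intervals):
    """Apply the rule once: keep the outer thirds of every interval."""
    result = []
    for start, end in intervals:
        third = (end - start) / 3
        result.append((start, start + third))
        result.append((end - third, end))
    return result


def cantor_set(depth):
    """Feed the output back into the same rule `depth` times."""
    intervals = [(Fraction(0), Fraction(1))]
    for _ in range(depth):
        intervals = cantor_step(intervals)
    return intervals
```

Each pass doubles the number of intervals while shrinking each to a third of its length, so the same pattern reappears at every scale.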

L-Systems

Developed in 1968 by Aristid Lindenmayer, an L-system or Lindenmayer system consists of an alphabet of symbols that can be used to make strings, a collection of production rules which expand each symbol into some larger string of symbols, an initial ‘axiom’ string from which to begin construction, and a mechanism for translating the generated strings into geometric structures. The derivation strings of developing L-systems can be interpreted as a linear sequence of instructions to a ‘turtle’, which interprets the instructions as movement and geometry building actions.1
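The rewriting mechanism can be sketched in a few lines. The axiom and production rules are those of the worked example in this section; the turtle convention (F moves forward one unit, + and - turn 90 degrees left and right, X and Y draw nothing) is an assumption made for illustration.

```python
RULES = {"X": "X+YF+", "Y": "-FX-Y"}


def expand(axiom, rules, iterations):
    """Rewrite every symbol in parallel, `iterations` times.

    Symbols without a production rule (here F, + and -) are copied unchanged.
    """
    string = axiom
    for _ in range(iterations):
        string = "".join(rules.get(symbol, symbol) for symbol in string)
    return string


def turtle_path(string, step=1):
    """Interpret a string as turtle instructions on a square grid.

    Assumed convention: F = move forward, + = turn left 90 degrees,
    - = turn right 90 degrees; any other symbol is ignored.
    """
    x, y = 0, 0
    dx, dy = 1, 0  # the turtle starts facing along the positive x-axis
    points = [(x, y)]
    for symbol in string:
        if symbol == "F":
            x, y = x + dx * step, y + dy * step
            points.append((x, y))
        elif symbol == "+":
            dx, dy = -dy, dx   # rotate 90 degrees counter-clockwise
        elif symbol == "-":
            dx, dy = dy, -dx   # rotate 90 degrees clockwise
    return points
```

For instance, `expand("FX", RULES, 2)` returns `"FX+YF++-FX-YF+"`, and passing the result to `turtle_path` yields the vertices of the polyline to draw.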

Axiom: FX

Production rules: X=X+YF+

Y=-FX-Y

Iteration 0: FX

Iteration 1: FX+YF+

Iteration 2: FX+YF++-FX-YF+

Iteration 3: FX+YF++-FX-YF++-FX+YF+--FX-YF+

Voronoi

Named after Georgy Voronoi, a Voronoi diagram is a partitioning of a plane into regions based on the distance to points in a specific subset of the plane. That set of points (called seeds, sites, or generators) is specified beforehand, and for each seed, there is a corresponding region consisting of all points closer to that seed than to any other. These regions are called Voronoi cells.
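The definition translates directly into a nearest-seed test. The sketch below labels every cell of a small grid with the index of its closest seed, which gives a rasterised Voronoi diagram; the function names and the grid sampling are assumptions, and production tools typically compute the cell boundaries geometrically instead.

```python
import math


def nearest_seed(point, seeds):
    """Return the index of the seed closest to `point` (Euclidean distance).

    Ties are resolved in favour of the seed that appears first in the list.
    """
    return min(range(len(seeds)), key=lambda i: math.dist(point, seeds[i]))


def voronoi_grid(seeds, width, height):
    """Label each integer grid point (x, y) with the index of its nearest seed."""
    return [[nearest_seed((x, y), seeds) for x in range(width)]
            for y in range(height)]
```

All grid points sharing a label belong to the same Voronoi cell.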

Natural systems

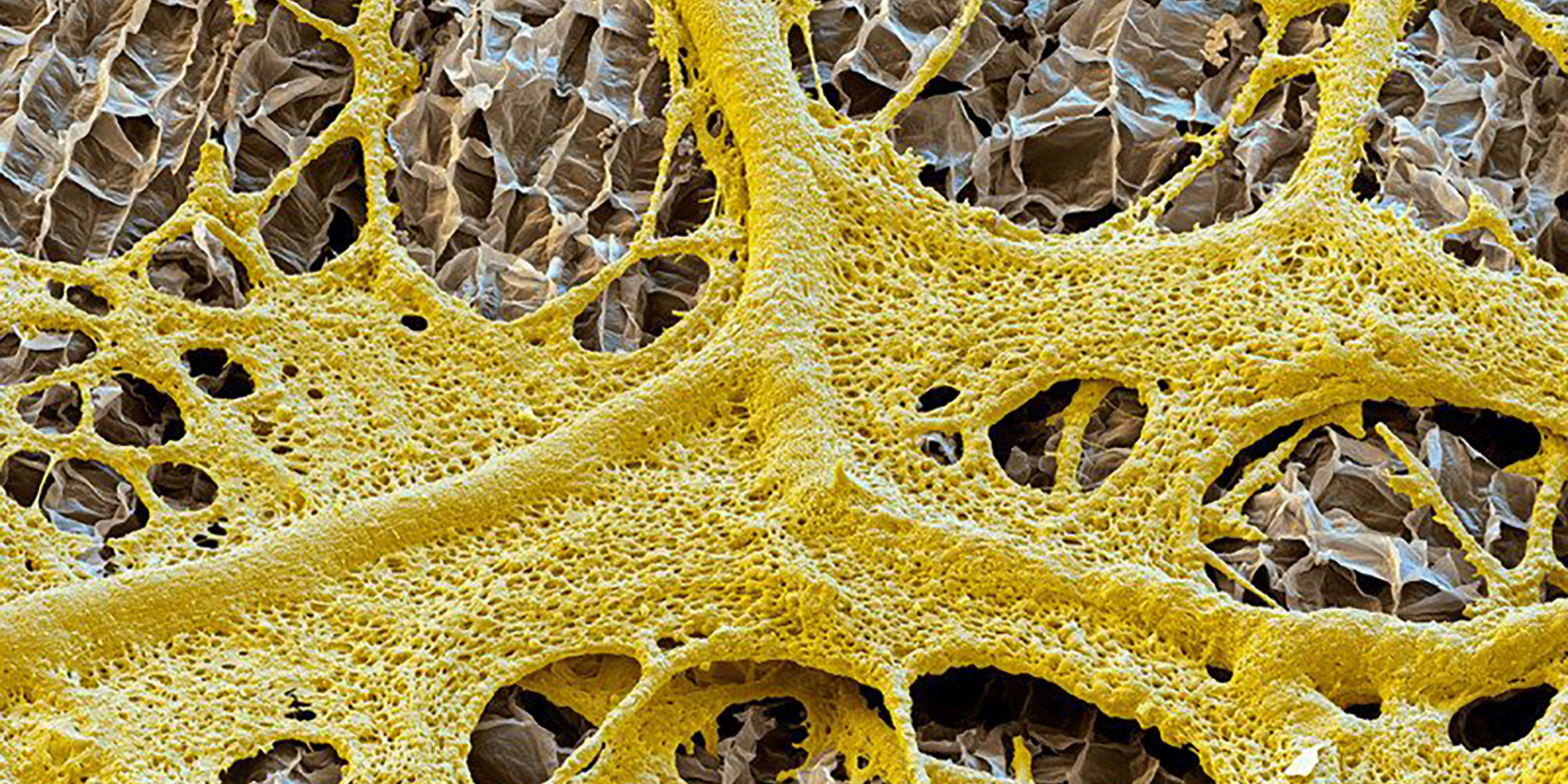

Biomimetics

Biomimetics or biomimicry is the imitation of the models, systems, and elements of nature for the purpose of solving complex human problems. It has emerged as an important field of research and is having particular resonance in morphogenetic architecture.2 Nature as a form of inspiration is not something new to architecture – one only has to look at the works of Frank Lloyd Wright or Antonio Gaudí to see an attempt to harmonise the natural and the built world. These organic architects drew inspiration from nature’s forms and structures.3 Indeed, as the engineer Fred Severud claims ‘…it is a fact that the contemporary architect or engineer faces few problems in structural design which Nature has not already met and solved’.4

Bottom-up

A bottom-up approach is the piecing together of systems to give rise to more complex systems, thus making the original systems sub-systems of the emergent system.

Complex Adaptive Systems

A term coined by John Holland and Murray Gell-Mann of the Santa Fe Institute, Complex Adaptive Systems (CAS) depend on ‘extensive interactions, the aggregation of diverse elements, and adaption or learning’.5 This can be seen in the collective intelligence of biological systems such as a colony of ants or termites, slime mould, flocking birds or a school of fish. It can also be demonstrated in non-biological systems such as the internet, the stock market or even cities. What becomes clear in these complex adaptive systems is that complex patterns emerge from remarkably few ingredients. So, although unpredictable outcomes may emerge, the results are intrinsically connected through the rules that govern them.

Darwinian evolution

Up until the latter half of the 19th century, it was believed by many that the earth and nature were a product of a Divine Architect.6 Moreover, ‘it was assumed that creatures start life as miniature but fully formed versions of their adult selves, and just grow bigger’.7 It was not until Charles Darwin’s acclaimed book, ‘The origin of species’,8 was published in 1859 that this notion was challenged.

Darwin proposed a theory of evolution based on random mutation and natural selection, which explained how apparent ‘design’ might arise in nature without a designer. He argued that species of life have evolved over time from common ancestors through the process of natural selection. As Flake 9 claims of Darwin’s work, ‘never have so many natural phenomena been explained by so few facts.’

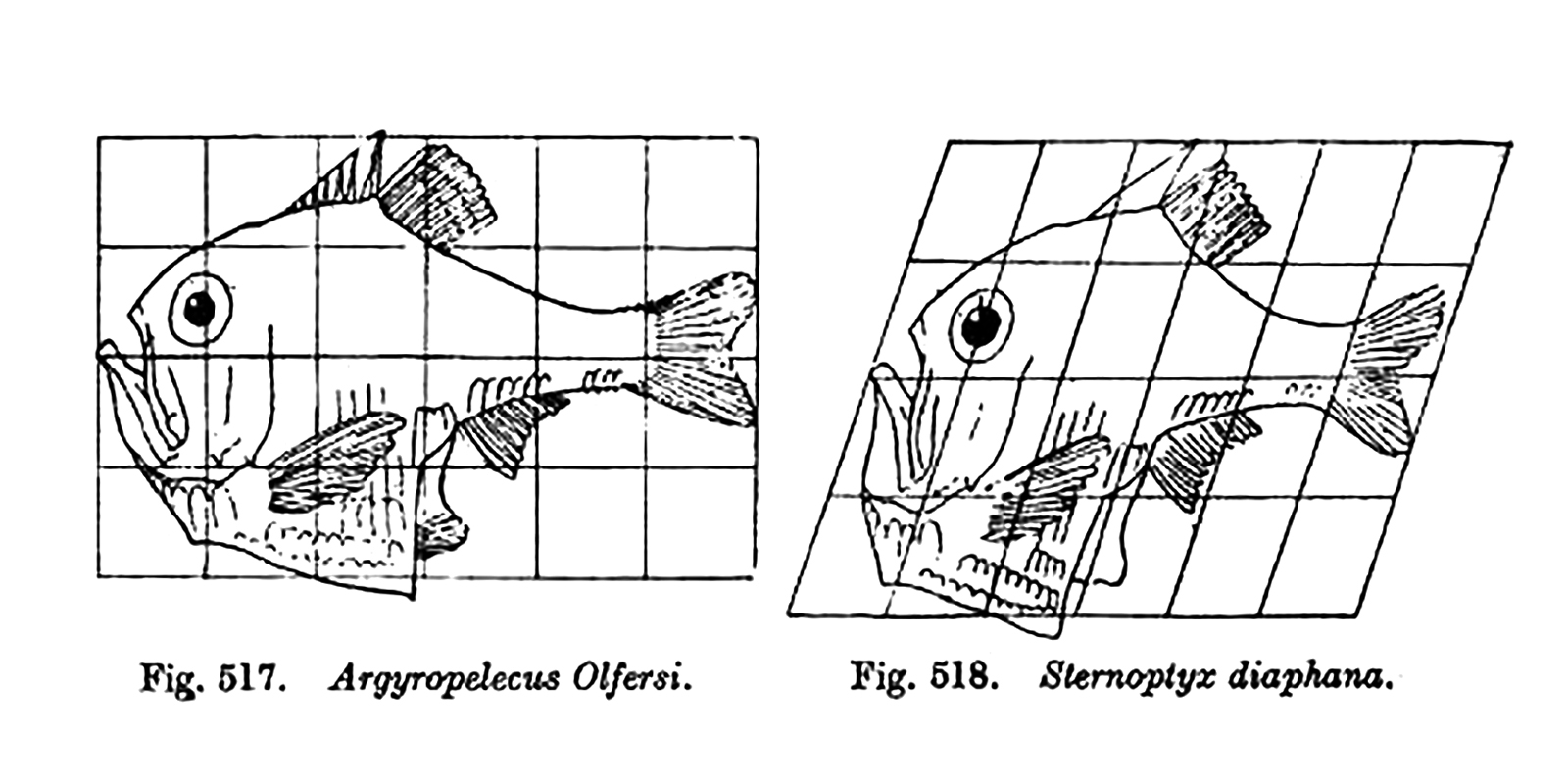

However, the notion of Darwinian evolution was not entirely satisfying in that it was essentially a narrative, not an explanation. It said nothing about the mechanism; in other words, it was a narrative with no details. It was not until D’Arcy Wentworth Thompson’s ‘On Growth and Form’, published in 1917, that the mechanics were addressed. (See Morphodynamic.)

Emergence

Emergence occurs when ‘the whole is greater than the sum of the parts,’ meaning the whole has properties its parts do not have. These properties come about because of interactions among the parts.

Evo-Devo

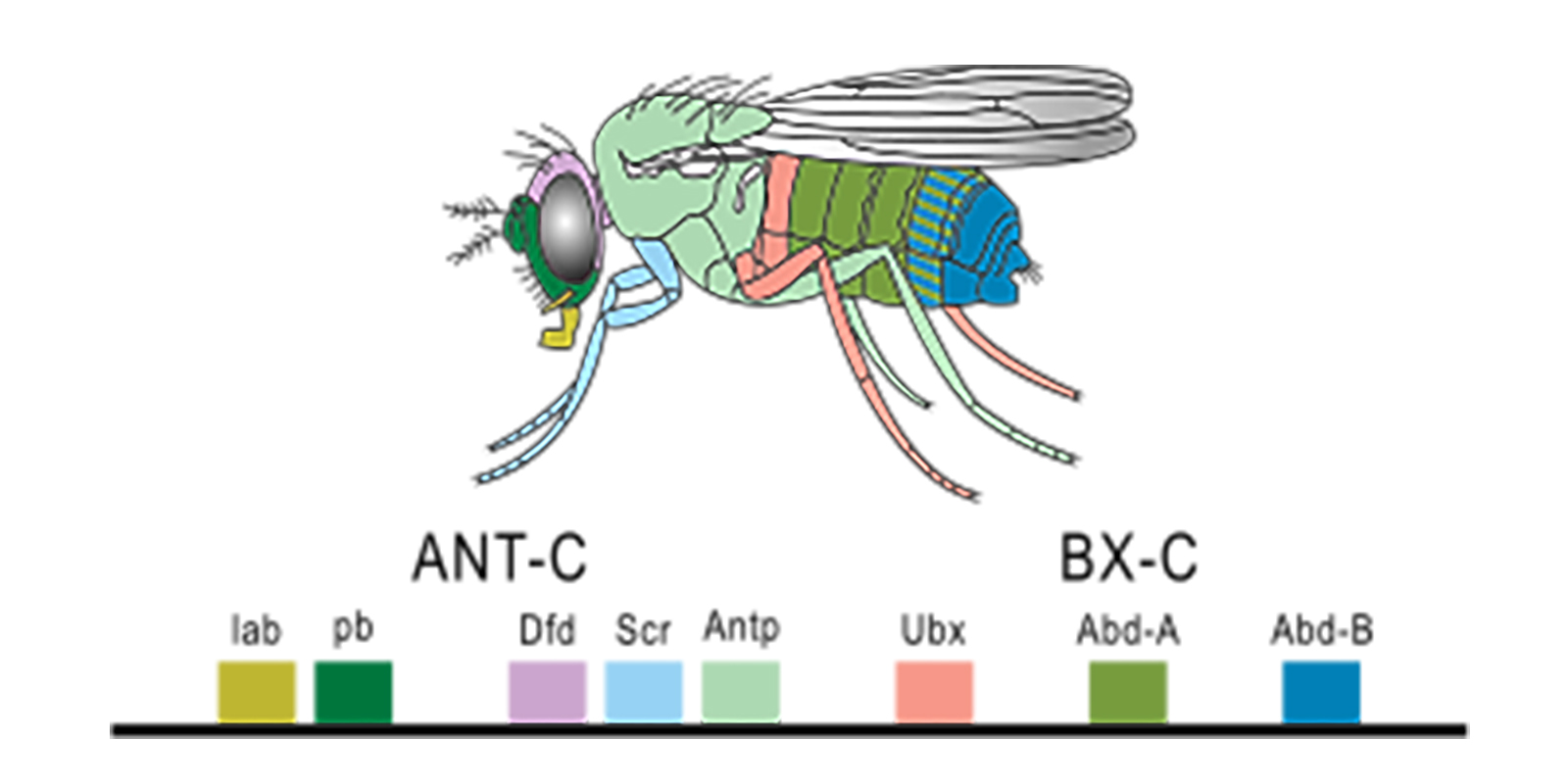

Evolutionary developmental biology, dubbed Evo-Devo for short, is revealing a great deal about the invisible genes and some simple rules that shape animal form and evolution.10 Carroll claims that Evo-Devo can be considered the third major act in a continuing evolutionary synthesis – illustrating how changes in development and genes are the basis of evolution.

Through the study of the fruit fly Drosophila melanogaster, geneticists are showing that morphogenesis is a process of gradual elaboration. It is not a process of spontaneous, arbitrary patterning, but rather one of careful subdivision via the so-called ‘homeotic’ genes. As Ball 11 elaborates, when the DNA sequences of homeotic genes were examined for various mutations of fruit flies, it was found that all of them contained a short stretch of DNA that was identical. This segment was named ‘homeobox’ and the genes containing it were dubbed ‘Hox’ genes.

Hox genes have also been identified in humans and other mammals, which implies that they are a rather ancient genetic element that determines body plans. By simply turning genetic dials and throwing genetic switches, the Hox genes control the organising logic of cellular differentiation from egg to adult.12 However, it is important to note that these genes are not the drivers of evolution. The genetic tool kit represents possibility – realisation of its potential is ecologically driven.13

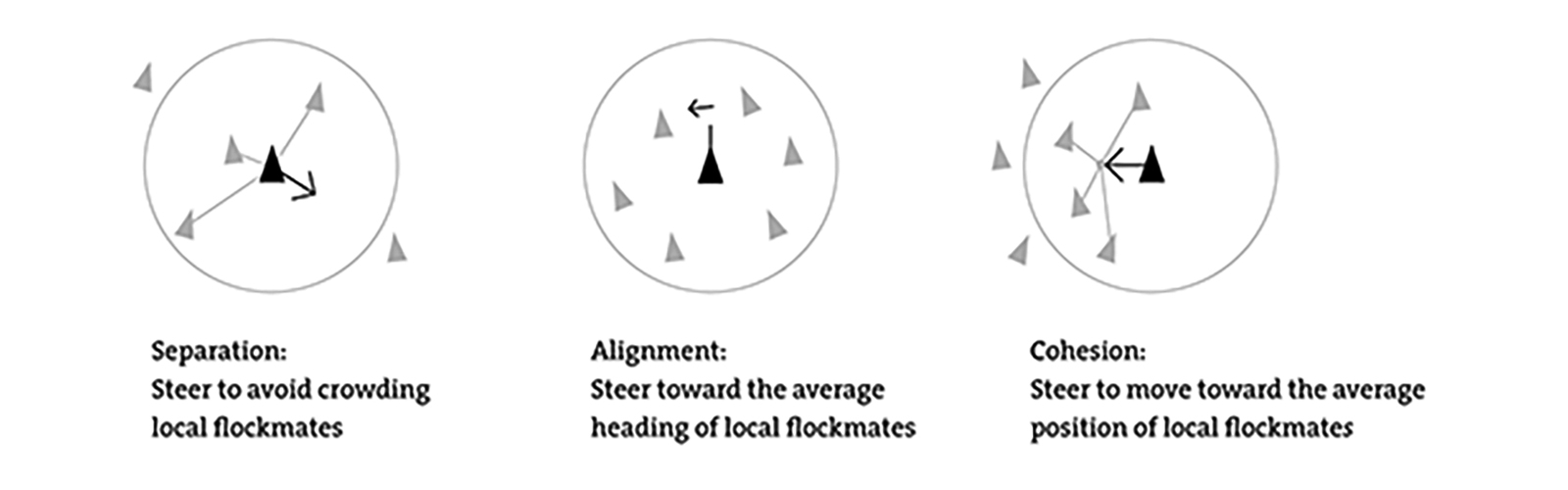

Flocking (behaviour)

Flocking is the collective motion of a large number of self-propelled entities and is a collective animal behaviour exhibited by many living beings such as birds, fish, bacteria, and insects. It is considered an emergent behaviour arising from simple rules that are followed by individuals and does not involve any central coordination. Flocking behaviour was simulated on a computer in 1987 by Craig Reynolds with his simulation program, Boids. This program simulates simple agents (boids) that are allowed to move according to a set of three basic rules: separation (steer away from neighbours that are too close), alignment (steer towards the average heading of neighbours) and cohesion (steer towards the average position of neighbours).
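A minimal sketch of one simulation step is given below, using the three steering rules usually associated with Reynolds' model: separation, alignment and cohesion. The function name, the neighbourhood radius and the weights are illustrative assumptions, and the separation rule is simplified to act on every neighbour within the radius.

```python
import math


def boid_step(boids, radius=5.0, w_sep=0.05, w_align=0.05, w_coh=0.01, dt=1.0):
    """Advance every boid by one time step.

    `boids` is a list of ((x, y), (vx, vy)) tuples. All boids are updated
    from the same snapshot, so no agent sees another's new state early.
    """
    updated = []
    for i, ((x, y), (vx, vy)) in enumerate(boids):
        neighbours = [b for j, b in enumerate(boids)
                      if j != i and math.dist((x, y), b[0]) < radius]
        ax = ay = 0.0
        if neighbours:
            n = len(neighbours)
            # Cohesion: steer towards the neighbours' average position.
            cx = sum(p[0] for p, _ in neighbours) / n
            cy = sum(p[1] for p, _ in neighbours) / n
            ax += w_coh * (cx - x)
            ay += w_coh * (cy - y)
            # Alignment: steer towards the neighbours' average velocity.
            avx = sum(v[0] for _, v in neighbours) / n
            avy = sum(v[1] for _, v in neighbours) / n
            ax += w_align * (avx - vx)
            ay += w_align * (avy - vy)
            # Separation: steer away from each neighbour.
            for (px, py), _ in neighbours:
                ax += w_sep * (x - px)
                ay += w_sep * (y - py)
        vx, vy = vx + ax * dt, vy + ay * dt
        updated.append(((x + vx * dt, y + vy * dt), (vx, vy)))
    return updated
```

Calling `boid_step` repeatedly on its own output animates the flock; no boid is given a global target, so any coordinated motion arises purely from the local rules.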

Morphodynamic

Morphodynamic describes an organism’s response to external influences. The idea originates in D’Arcy Wentworth Thompson’s ‘On Growth and Form’,14 published in 1917, which was the first book to provide a formal analysis of pattern and form in nature. Thompson argued that biologists were overemphasising evolution as the fundamental determinant of form and underemphasising the roles of physical laws and mechanics. He insisted that there were many forms in the natural world, such as the spiral horns of a Dall sheep, that were shaped not by evolution, but as a direct consequence of the conditions of growth or the forces in the environment.15

Morphogenetic

Morphogenetic is synonymous with Bottom-up. Derived from the Greek terms ‘morphe’ (shape/form) and genesis (creation), morphogenesis was initially used in the realm of biological sciences to refer to ‘the logic of form generation and pattern-making in an organism through processes of growth and differentiation’.16 Morphogenetic form is therefore not predefined but emerges from the rules that define it.

While this may seem like an abstract concept, it can be applied to more tangible applications. For example, we can use the biological principle of stigmergy to generate programmatic relationships and adjacencies. Here agents are leaving a ‘virtual trace’ which influences other agents – both positively and negatively.

Non-linear

Non-linear systems cannot be explained simply through an understanding of their parts, because their primary behaviours – their qualities – represent properties of interactions between parts.

Top-down

In a top-down approach, an overview of the system is formulated, specifying, but not detailing, any first-level subsystems. Each subsystem is then refined in yet greater detail, sometimes in many additional subsystem levels, until the entire specification is reduced to base elements.

Computation

Brute force

A brute force search is a very general problem-solving technique that consists of systematically enumerating all possible candidates for the solution and checking whether each candidate satisfies the problem’s statement. While a brute force search is simple to implement, and will always find a solution if it exists, its cost is proportional to the number of candidate solutions – which in many practical problems tends to grow very quickly as the size of the problem increases. Therefore, a brute force search is typically used when the problem size is limited, or when there are problem-specific heuristics that can be used to reduce the set of candidate solutions to a manageable size. The method is also used when the simplicity of implementation is more important than speed.

The animation below shows all the possible combinations of apartments for a given floor plate of 400 sqm. Three sliders are established, each representing the number of apartments per floor for a particular apartment type. Assuming there will be a maximum of 10 apartments of each type per floor, each slider can be set to have a minimum value of 0 and a maximum value of 10. There are, therefore, 11 possible states for each slider (gene). Since there are three sliders, one for each apartment type, this equates to 11 × 11 × 11 = 1,331 possible combinations.
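The enumeration can be sketched as follows. The 400 sqm floor plate and the 0 to 10 range per slider come from the example; the apartment type names, their areas and the 'use as much of the floor plate as possible' criterion are hypothetical values chosen only to make the sketch runnable.

```python
from itertools import product

# Hypothetical apartment types and areas in sqm (not from the original example).
AREAS = {"studio": 40, "one_bed": 55, "two_bed": 80}
FLOOR_PLATE = 400   # sqm, as in the example
MAX_PER_TYPE = 10   # each slider runs from 0 to 10


def used_area(mix):
    """Total area taken up by a mix such as (2, 3, 1)."""
    return sum(count * area for count, area in zip(mix, AREAS.values()))


def enumerate_mixes():
    """Every combination of 0-10 apartments for each of the three types."""
    return list(product(range(MAX_PER_TYPE + 1), repeat=len(AREAS)))


def best_mix():
    """Brute force: check every candidate and keep the one that fills
    the most floor area without exceeding the floor plate."""
    feasible = [mix for mix in enumerate_mixes() if used_area(mix) <= FLOOR_PLATE]
    return max(feasible, key=used_area)
```

`enumerate_mixes()` produces the 1,331 candidates mentioned above. The search is trivial to write, but the candidate count multiplies with every extra slider or slider state.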

Dimensionality reduction

Dimensionality reduction is a method for decreasing the number of input attributes (variables). Reducing the number of inputs also reduces the complexity of the problem.

Fitness

In order for a genetic algorithm to simulate evolution, a selection criterion needs to be established to stipulate which agents are to breed. This criterion is termed ‘fitness’ and, as a result, how one defines fitness is critical to how a genetic algorithm works.

The philosopher Manuel de Landa claims 17 that in a sense, ‘evolutionary simulations replace design, since artists can use this software to breed new forms rather than specifically design them’. He argues that if the designer uses virtual evolution as a design tool to generate new forms and then uses this information to judge the aesthetic fitness of the outcomes, it transforms the role of the architect into ‘the equivalent of a prize-dog or a race breeder’.18

In this animation, there are two variables, the X-coordinate and the Y-coordinate. Fitness is defined as maximising the Z-coordinate. That is, find the highest point on the fitness landscape. A genetic algorithm is used to find the optimal solution.

Fuzzy logic

Fuzzy logic is a form of many-valued logic in which the truth values of variables may be any real number between 0 and 1. It is employed to handle the concept of partial truth, where the truth value may range between completely true and completely false. By contrast, in Boolean logic, the truth values of variables may only be the integer values 0 or 1.
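To make 'partial truth' concrete, the sketch below evaluates fan-speed rules of the kind listed in this section. The triangular membership functions, the temperature breakpoints (in °C) and the numeric fan speeds are all assumptions made for illustration, as is the weighted-average way of combining the rules.

```python
def triangular(x, left, peak, right):
    """Degree of membership in [0, 1]: 0 outside (left, right), 1 at the peak."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


# Hypothetical fuzzy sets over temperature in degrees Celsius.
SETS = {
    "very_cold": (-10, 0, 10),
    "cold": (0, 10, 20),
    "warm": (10, 20, 30),
    "hot": (20, 30, 40),
}

# Hypothetical fan speeds (percent) for stopped, slow, moderate and high.
SPEEDS = {"very_cold": 0, "cold": 25, "warm": 50, "hot": 100}


def fan_speed(temperature):
    """Blend the rule outputs, weighting each rule by how true its condition is."""
    truth = {name: triangular(temperature, *abc) for name, abc in SETS.items()}
    total = sum(truth.values())
    if total == 0:
        return 0.0
    return sum(truth[name] * SPEEDS[name] for name in SETS) / total
```

At 15 °C the temperature is half 'cold' and half 'warm' under these assumed sets, so `fan_speed(15)` returns 37.5, midway between the slow and moderate speeds.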

IF temperature IS very cold THEN fan_speed is stopped

IF temperature IS cold THEN fan_speed is slow

IF temperature IS warm THEN fan_speed is moderate

IF temperature IS hot THEN fan_speed is high

Genetic algorithm

In the early 1990s, ecologist Thomas Ray of the Santa Fe Institute took the notion of Complex Adaptive Systems one step further by introducing biological evolution into the simulation. His much-acclaimed TIERRA program introduced the notion of a Genetic Algorithm which could simulate ‘the behavior and adaption of a population of candidate solutions over time as generations are created, tested, and selected through repetitive mating and mutation’.19

This simulation runs the same apartment combination generator script that we saw previously with brute force, but this time using a genetic algorithm to arrive at an optimal solution.
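The loop of creating, testing and selecting generations can be sketched as follows. This is not the apartment script referred to above: it uses a hypothetical two-variable fitness landscape (X and Y as genes, height Z as fitness), and the population size, mutation strength and selection scheme are assumptions chosen for brevity.

```python
import random


def fitness(genome):
    """Height (Z) of a hypothetical landscape with a single peak of 10 at (3, -1)."""
    x, y = genome
    return 10 - (x - 3) ** 2 - (y + 1) ** 2


def evolve(generations=50, population_size=30, mutation=0.3, seed=0):
    """Return the fittest genome found and the best fitness of each generation."""
    rng = random.Random(seed)
    population = [(rng.uniform(-10, 10), rng.uniform(-10, 10))
                  for _ in range(population_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: population_size // 2]  # selection: the fitter half breeds
        children = [population[0]]                    # elitism: keep the best unchanged
        while len(children) < population_size:
            mother, father = rng.sample(parents, 2)
            # Crossover: X from one parent, Y from the other, then mutate both.
            child = (mother[0] + rng.gauss(0, mutation),
                     father[1] + rng.gauss(0, mutation))
            children.append(child)
        population = children
    return max(population, key=fitness), history
```

Because the best genome is always carried over, the recorded best fitness never decreases from one generation to the next. Unlike brute force, only a small sample of the solution space is ever evaluated.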

If-then rules

If-then rules are used to formulate conditional statements: if a condition holds, then a given action or conclusion follows.

Pareto optimality

Steadman 20 proposes that multiple fitness criteria can be combined if the principle of Pareto optimality is introduced. Borrowed from classical economics, a solution is Pareto optimal if performance cannot be improved on any one criterion without worsening performance of others.
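The definition can be turned into a simple filter. In the sketch below each solution is a tuple of scores, one per criterion, and higher is assumed to be better on every criterion; the function names are illustrative.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every criterion
    and strictly better on at least one (higher scores are better)."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(solutions):
    """Keep the solutions that no other solution dominates."""
    return [s for s in solutions
            if not any(dominates(other, s) for other in solutions)]
```

For the scores `[(1, 5), (3, 3), (5, 1), (2, 2), (1, 1)]`, the function returns `[(1, 5), (3, 3), (5, 1)]`: none of those three can be improved on one criterion without losing on the other, whereas `(2, 2)` and `(1, 1)` are beaten outright by `(3, 3)`.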

Recursion

Recursion is the process a procedure goes through when one of the steps of the procedure involves invoking the procedure itself. A procedure that goes through recursion is said to be ‘recursive’. To understand recursion, one must recognise the distinction between a procedure and the running of a procedure. A procedure is a set of steps based on a set of rules. The running of a procedure involves following the rules and performing the steps.
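A short sketch of a recursive procedure follows: a square is split into four quadrants, and the same procedure is then invoked on each quadrant until a chosen depth is reached. The subdivision task and the function name are assumptions picked because the pattern is common in design scripts.

```python
def subdivide(x, y, size, depth):
    """Recursively split a square into quadrants.

    Returns the leaf squares as (x, y, size) tuples, where (x, y) is the
    lower-left corner. `depth` is the number of times to split.
    """
    if depth == 0:              # base case: stop recursing
        return [(x, y, size)]
    half = size / 2
    squares = []
    for dx in (0, half):
        for dy in (0, half):
            # Recursive step: the procedure invokes itself on each quadrant.
            squares.extend(subdivide(x + dx, y + dy, half, depth - 1))
    return squares
```

The function body is the procedure; each call, such as `subdivide(0, 0, 8, 2)`, is one running of it, and that single call triggers twenty further runnings to produce its 16 leaf squares.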

Solution space

The solution space is the set of all possible candidates for the solution. (See Brute force.)

Process

Algorithmic

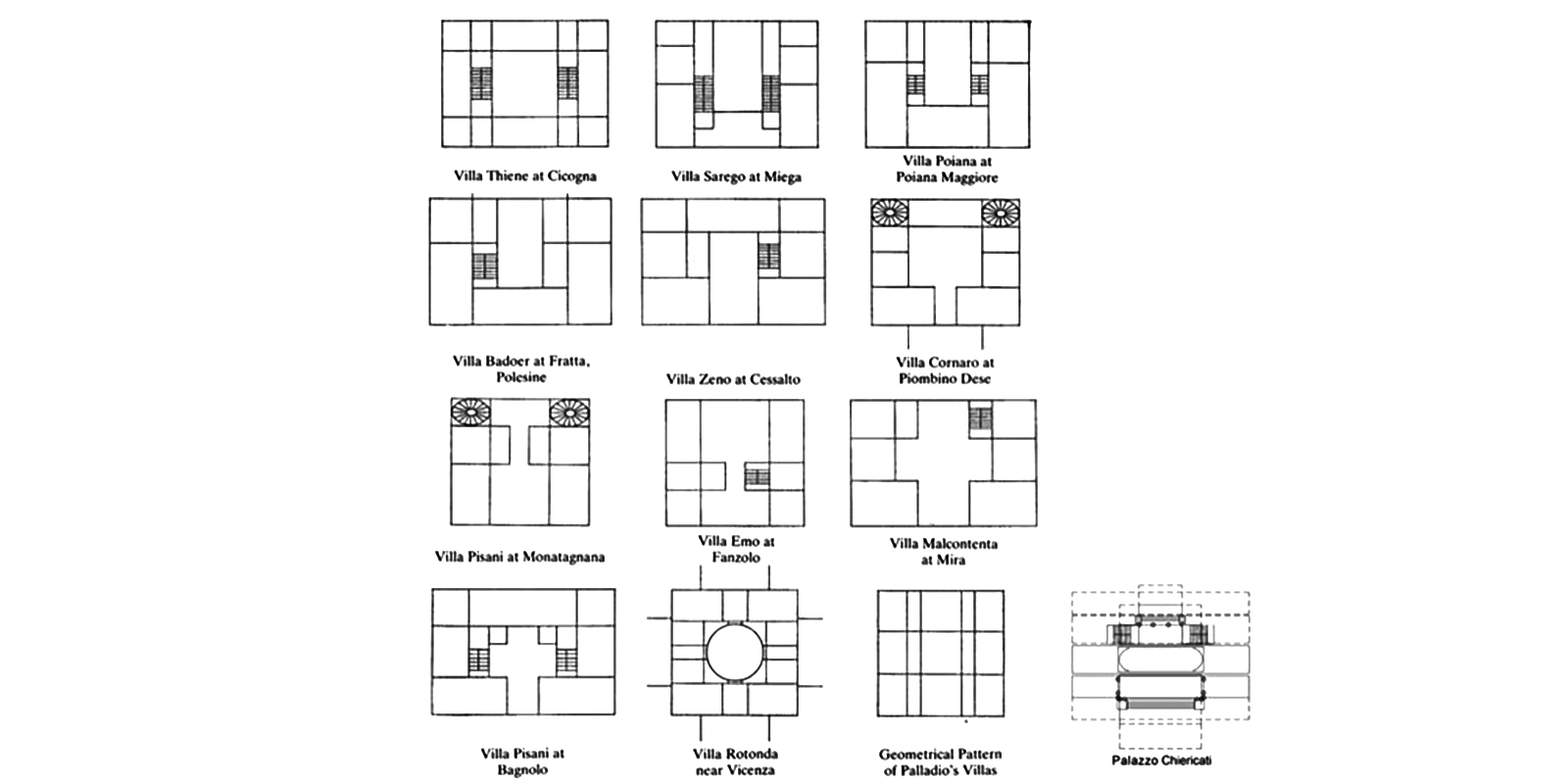

In its most general sense, an algorithm is a process of addressing a problem in a finite number of steps. It can be an articulation of either ‘a strategic plan for solving a known problem or a stochastic search towards possible solutions to a partially known problem’.21 Algorithms are expressed in terms of mathematical equations which define the rules of the model.

Contrary to its digital connotations, it could be argued that algorithms have been used implicitly in architecture for centuries prior to the digital age. Terzidis 22 postulates that while the algorithm is often associated with computer science, the use of instructions, commands or rules in architecture is, in essence, algorithmic. Hersey and Freedman 23 for instance, were able to ‘detect, extract, and formulate rigorous geometric rules’24 by which Palladio conceived his villas.

Data analysis

Data analysis uses computational methods for extracting information from large amounts of data. Although it differs from data mining, the two terms are often used interchangeably. Data mining, however, uses machine learning and is more data-driven.

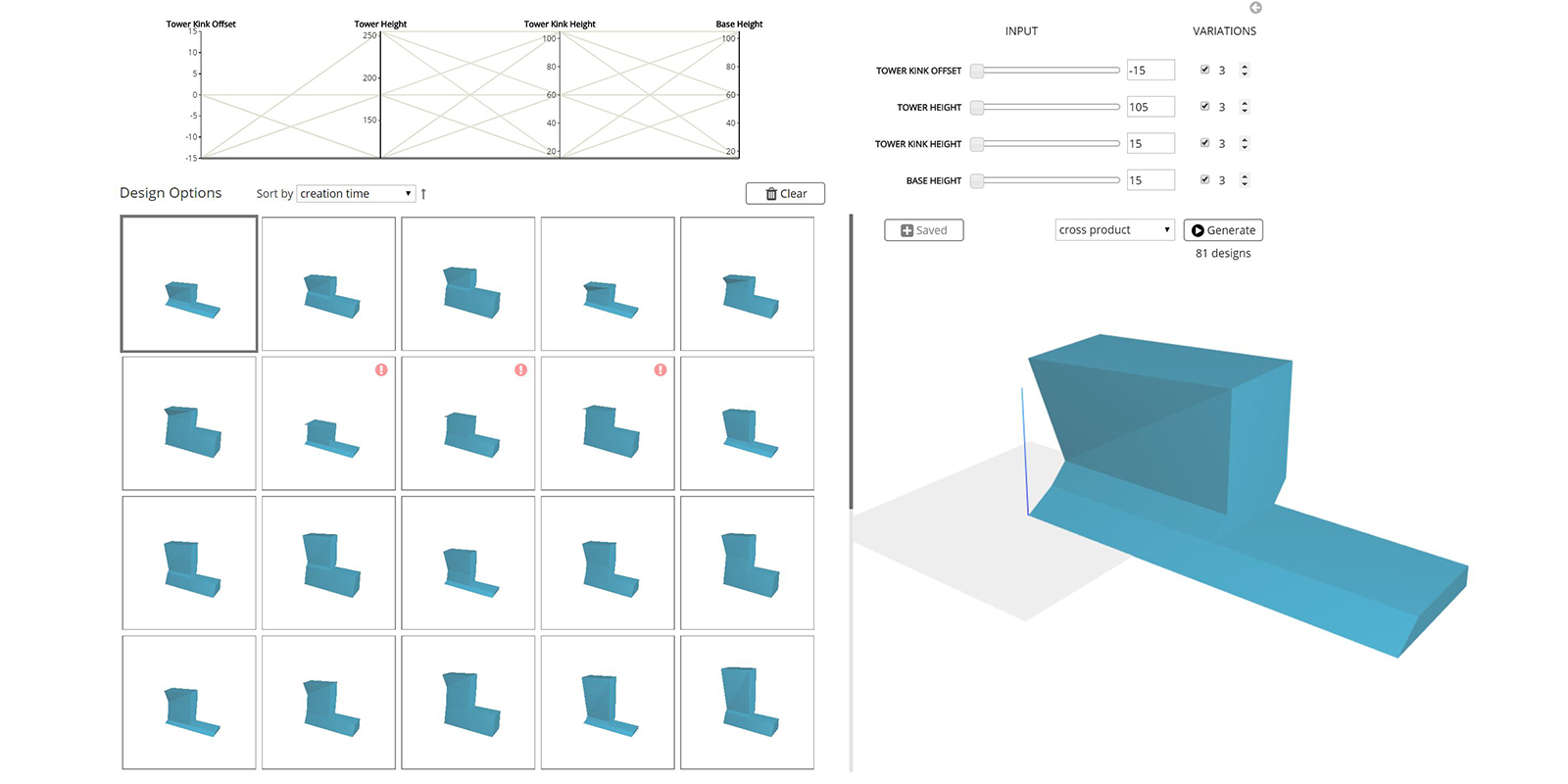

Generative design

Generative design is a form-finding process which starts with design goals (fitness) and then explores innumerable possible permutations of a solution to find the best option. It can work on geometry.

It can also work on data. In an earlier example demonstrating Brute Force, we evaluated apartment mixes on a level-by-level basis. However, other parameters must also be addressed, such as the overall apartment mix. In order to do this, every level must be taken into consideration. In this scenario, the building has 20 storeys with 20 possible apartment mixes per floor; this equates to 20^20, or roughly 10^26 (about 100 septillion), possible solutions if the brute force methodology is adopted. This is obviously not viable due to the time it would take to compute all possibilities. This animation shows how Galapagos, an evolutionary solver within Grasshopper, can find an optimal solution significantly faster. The final result is a mathematically tested feasibility study that can be used as the basis to generate form.

Parametric (modelling)

Parametric modelling is the creation of a digital model based on a series of pre-programmed rules or algorithms known as ‘parameters’. That is, the model, or elements of it are generated automatically by internal logic arguments rather than by being manually manipulated.

Typically, parametric rules create relationships between different elements of the design. For example, a rule might be created to ensure that walls must start at floor level and reach the underside of the ceiling. Then if the floor to ceiling height is changed, the walls will automatically adjust to suit.
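The wall rule can be sketched with two small classes. The class and attribute names are hypothetical and do not correspond to any particular modelling package; the point is only that the wall stores no height of its own and derives it from the room.

```python
from dataclasses import dataclass


@dataclass
class Room:
    floor_level: float        # metres
    floor_to_ceiling: float   # metres; the driving parameter

    @property
    def ceiling_level(self):
        return self.floor_level + self.floor_to_ceiling


@dataclass
class Wall:
    room: Room

    @property
    def base(self):
        """Rule: walls start at floor level."""
        return self.room.floor_level

    @property
    def top(self):
        """Rule: walls reach the underside of the ceiling."""
        return self.room.ceiling_level

    @property
    def height(self):
        return self.top - self.base
```

Changing `room.floor_to_ceiling` from 2.7 to 3.0 changes `wall.height` accordingly without the wall being edited, because the height is recomputed from the relationship each time it is read.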

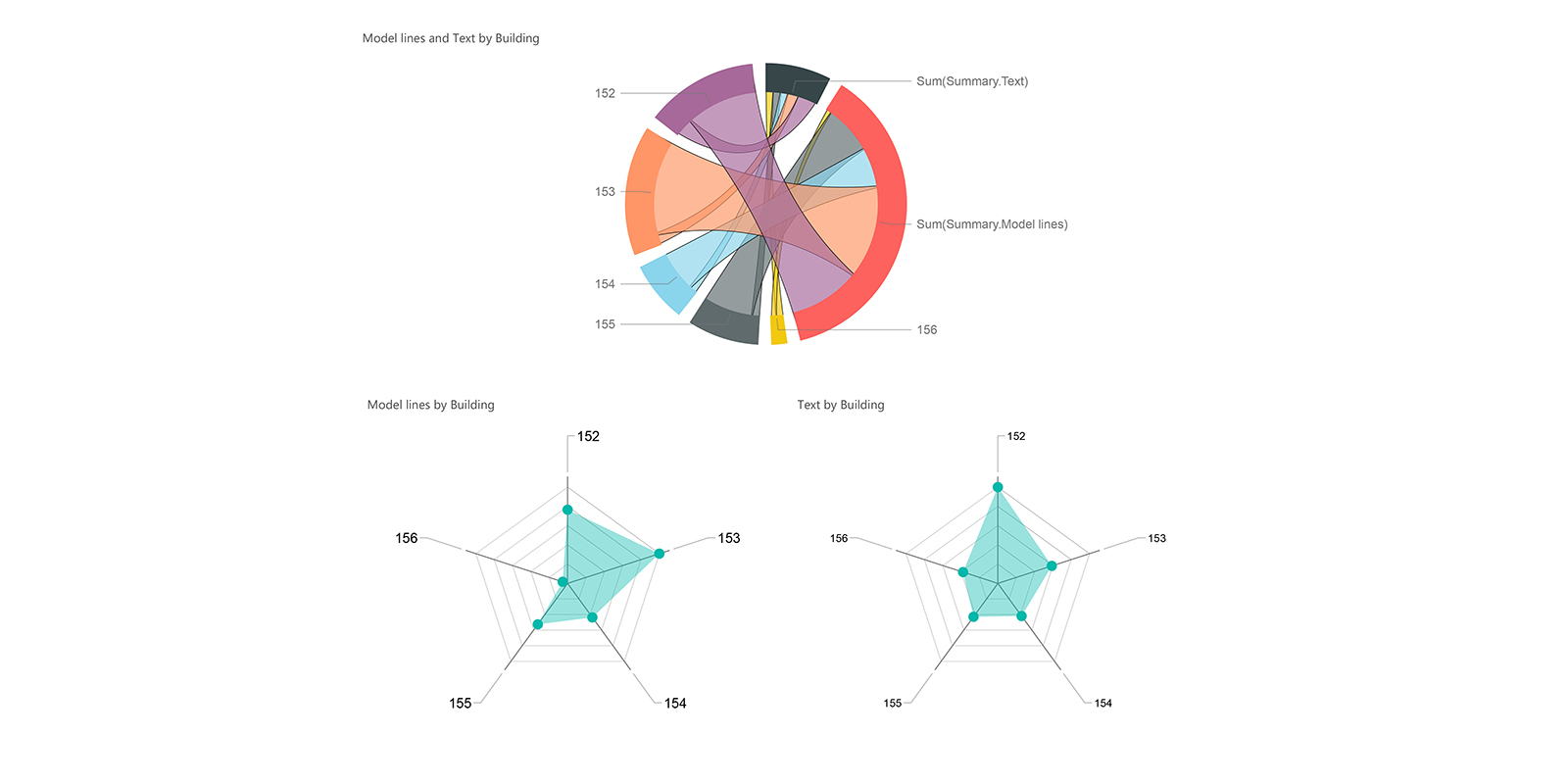

Parametric BIM

Parametric BIM is a relatively new term which describes the convergence of computational design and BIM. Unlike computational design, which works with abstract geometries, Parametric BIM engages directly with the BIM model to generate, extract and manipulate data.

It can also be used to perform analyses and embed the results directly into the BIM model.

Parametricism

Parametricism is a style within contemporary avant-garde architecture, promoted as a successor to post-modern architecture and modern architecture. The term was coined in 2008 by Patrik Schumacher.25 26

Fabrication

Digital Fabrication

Digital fabrication uses design-to-fabrication workflows to enable a faster construction process, minimise resources, and use material-specific design solutions. Nervous System’s Kinematics Link dress, for example, is a mass-customised, 3D printed dress. Composed of thousands of unique interlocking components, each dress is 3D printed as a single folded piece and requires no assembly. However, the dress was too large for the 3D printer. So what to do? By folding the garments prior to printing them, the designers were able to make a complex structure larger than the constraints of the 3D printer. The simulation uses rigid body physics to accurately model the behaviour of the structures, allowing 85% compression. What I love about this project is that it represents a new approach to manufacturing which tightly integrates design, simulation, and digital fabrication to create complex, customised products using ubiquitous manufacturing hardware.

Mass customisation

Mass customisation is the use of flexible computer-aided manufacturing systems to produce custom output. Such systems combine the low unit costs of mass production processes with the flexibility of individual customisation. Thus, standardisation does not deliver any economy of scale in a digital design and fabrication workflow.

The early pioneers of mass customisation argued that digital design and fabrication should not be used to emulate mechanical mass production but to do something else – something that industrial assembly lines cannot do.27 This gave rise to the reintroduction of ornamentation into architecture. Carpo explains that since the beginning of modern times, Western architecture has developed a systematic theory of ornament as supplement: Something which is added on top of an object or a building, and which can be taken away if necessary.28 However, in the age of mass customisation, decoration is no longer an addition; ornament is no longer a supplemental expense.

Mass production

Mass production is the production of large amounts of standardised products. In mass production, materials are designed to be, as far as possible, homogeneous, isotropic and compliant with industrial standards.

Material feedback

Material feedback allows for the adjusting of digital fabrication in order to negotiate material properties and imprecisions. Eladio Dieste’s particular approach to the design of buildings redefines ideas of materiality in architecture. Through the malleability of the surface, he was able to calibrate a precise relation between the whole and the individual unit of construction, ultimately turning the design process into a problem of material logic.

This research presents the initial developments of a method to train an adaptive robotic system for subtractive manufacturing with timber. The methods were evaluated in a series of tests where the trained networks were successfully used to predict fabrication parameters for simple cutting operations with chisels and gouges.

Robotic fabrication

The initial pursuits to integrate robotic technologies into architecture are best described by the term ‘construction automation’.29 However, the early pioneers of mass customisation argued that digital design and fabrication should not be used to emulate mechanical mass production but to do something else – something that industrial assembly lines cannot do.

The video below explores the architectural potential of robotic sewing of bespoke timber veneer laminates in combination with elastic bending. Although modern timber fabrication technology allows the material to be formed into a variety of shapes, products and dimensions, the inherent material characteristics of timber are mostly neglected or even suppressed in the design and fabrication process. Yet, timber exhibits excellent mechanical behaviour and high potential for textile and multi-material connections outside the scope of conventional timber connections.

References

1 Morphocode. Intro into L-Systems.

2 Leach, N. (2009, Jan/Feb). Digital Morphogenesis in Architectural Design: Theoretical Meltdown, Wiley, Chichester, pp. 32-37.

3 Frazer, J. (1995). A Natural Model for Architecture in An Evolutionary Architecture. AA Press, London, p. 10.

4 Steadman, P. (2008). Afterword: Developments Since 1980. In The Evolution of Designs: Biological Analogy in Architecture and the Applied Arts. Routledge, London, pp. 237-270.

5 Holland, J. (1996). Hidden order: How Adaptation Builds Complexity. Basic Books, New York, p. 4.

6 Ball, P. (2009). Nature’s patterns: A Tapestry in Three Parts. Oxford University Press, Oxford, pp. 7-8.

7 Ball, P. (2009). Nature’s patterns: A Tapestry in Three Parts. Oxford University Press, Oxford, p. 260.

8 Darwin, C. (1859). The Origin of Species. John Murray, London.

9 Flake, G. W. (1998). Genetics and Evolution in The Computational Beauty of Nature. MIT Press, Cambridge, pp. 339-360.

10 Carroll, S. (2007). Endless Forms Most Beautiful: The New Science of Evo Devo and the Making of the Animal Kingdom. Phoenix, London, p. X.

11 Ball, P. (2009). Nature’s Patterns: A Tapestry in Three Parts. Oxford University Press, Oxford, pp. 274-275.

12 Ball, P. (2009). Nature’s Patterns: A Tapestry in Three Parts. Oxford University Press, Oxford, p. 271.

13 Carroll, S. (2007). Endless Forms Most Beautiful: The New Science of Evo Devo and the Making of the Animal Kingdom. Phoenix, London, p. 286.

14 Thompson, D. (1992). On Growth and Form. Cambridge University Press, New York.

15 Ball, P. (2009). Nature’s Patterns: A Tapestry in Three Parts. Oxford University Press, Oxford, p. 12.

16 Leach, N. (2009, Jan/Feb). Digital Morphogenesis in Architectural Design: Theoretical Meltdown, Wiley, Chichester, pp. 32-37.

17 De Landa, M. (2002). Deleuze and the Use of the Genetic Algorithm in Architecture. In Architectural Design: Contemporary Techniques in Architecture, Wiley, Chichester, pp. 9-12.

18 De Landa, M. (2002). Deleuze and the Use of the Genetic Algorithm in Architecture. In Architectural Design: Contemporary Techniques in Architecture, Wiley, Chichester, pp. 9-12.

19 Terzidis, K. (2006). Algorithmic Architecture. Elsevier, Oxford, p. 19.

20 Steadman, P. (2008). Afterword: Developments Since 1980. In The Evolution of Designs: Biological Analogy in Architecture and the Applied Arts. Routledge, London, pp. 237-270.

21 Terzidis, K. (2006). Algorithmic Architecture. Elsevier, Oxford, p. 15.

22 Terzidis, K. (2006). Algorithmic Architecture. Elsevier, Oxford, p. 39.

23 Hersey, G., & Freedman, R. (1992). Possible Palladian Villas: (Plus a few instructively impossible ones). MIT Press, Cambridge.

24 Terzidis, K. (2006). Algorithmic Architecture. Elsevier, Oxford, p. 21.

25 Schumacher, P. (2009). Parametricism: A New Global Style for Architecture and Urban Design. In Architectural Design: Digital Cities, Leach, N. (ed). Wiley, Chichester, pp. 14-23.

26 Schumacher, P. (2016). Architectural Design: Parametricism 2.0. John Wiley & Sons, London.

27 Carpo, M. (2017). The Second Digital Turn: Design Beyond Intelligence. The MIT Press, Cambridge, p. 3.

28 Carpo, M. (2017). The Second Digital Turn: Design Beyond Intelligence. The MIT Press, Cambridge, p. 79.

29 Bechthold, M. (2013). Design Robotics: New Strategies for Material System research. In Inside Smartgeometry: Expanding the Architectural Possibilities of Computational design, Peters, B. & Peters, T. (eds). Wiley, Chichester, pp. 254-265.