Building Information Modelling (BIM) entails interdependencies between technological, process and organisational/cultural aspects. These mutual dependencies have created a BIM ecosystem in which BIM-related products form a complex network of interactions.¹ For a long time, interoperability between these products has been virtually non-existent, leaving users unwilling to move between different software platforms. Rather than using the best product for the job, users have preferred to remain in the software that they are most familiar with, possibly to the detriment of the design. One example is conceptual massing within Autodesk Revit. This post explores how to extend Revit’s modelling capabilities by combining it with McNeel’s Rhinoceros.

A software ecosystem

It is important to emphasise that BIM is an ecosystem and that no single piece of software can do everything. Within the graphic design industry, Adobe has recognised this and has produced a suite of software including Photoshop, Illustrator and InDesign, amongst others. Each application has a clear and well-defined scope: Photoshop is used for raster images, Illustrator for vector images and InDesign to compile it all together. Each application is separate but linked to the others in the workflow. In general, architects accept this ecosystem and are agile enough to move between platforms. However, this ethos of a software ecosystem needs to be applied to BIM software as well.

Different BIMs for different purposes

The term ‘BIM’ can mean different things to different people. Although BIM spans the entire project from conception through to facilities management, different people will have different biases depending on their role. For example, as an architect, I am naturally more interested in geometry and the design authoring phase of BIM. These biases, which are endemic in the AEC industry, are elaborated on in Antony McPhee’s post, ‘Different BIMs for different purposes’.

With so many perspectives on what BIM is, one needs to question the role of a BIM Manager. Dominik Holzer, in his RTC Australasia presentation, ‘You are a BIM Manager – Really?’, discusses how BIM managers need to go beyond tool knowledge and develop management acumen. But that can be a hard thing to accomplish. Even if we focus on a particular BIM bias, say Design Authoring, there is a plethora of tools out there that a BIM manager must master, or at the very least, have an appreciation of. Here are just a few of them.

Most of the software mentioned above is generally accepted as part of a BIM workflow. Why then are so many people resistant to creating a federated model and instead insist that everything must be created within Autodesk Revit? BIM is bigger than just a single piece of software, and we must therefore seriously consider interoperability in our workflows.

Revit’s conceptual modelling limitations

Although Autodesk Revit is often chosen as the primary documentation tool in many architectural offices, it is widely acknowledged that Revit is extremely limited in dealing with complex conceptual modelling. Autodesk’s attempts at introducing a dedicated conceptual modelling environment in the form of FormIt and Inventor have not yet taken hold. As a workaround, many offices have adopted McNeel’s Rhinoceros, or Rhino for short, for the conceptual design phase and Autodesk Revit for the development and documentation phases.

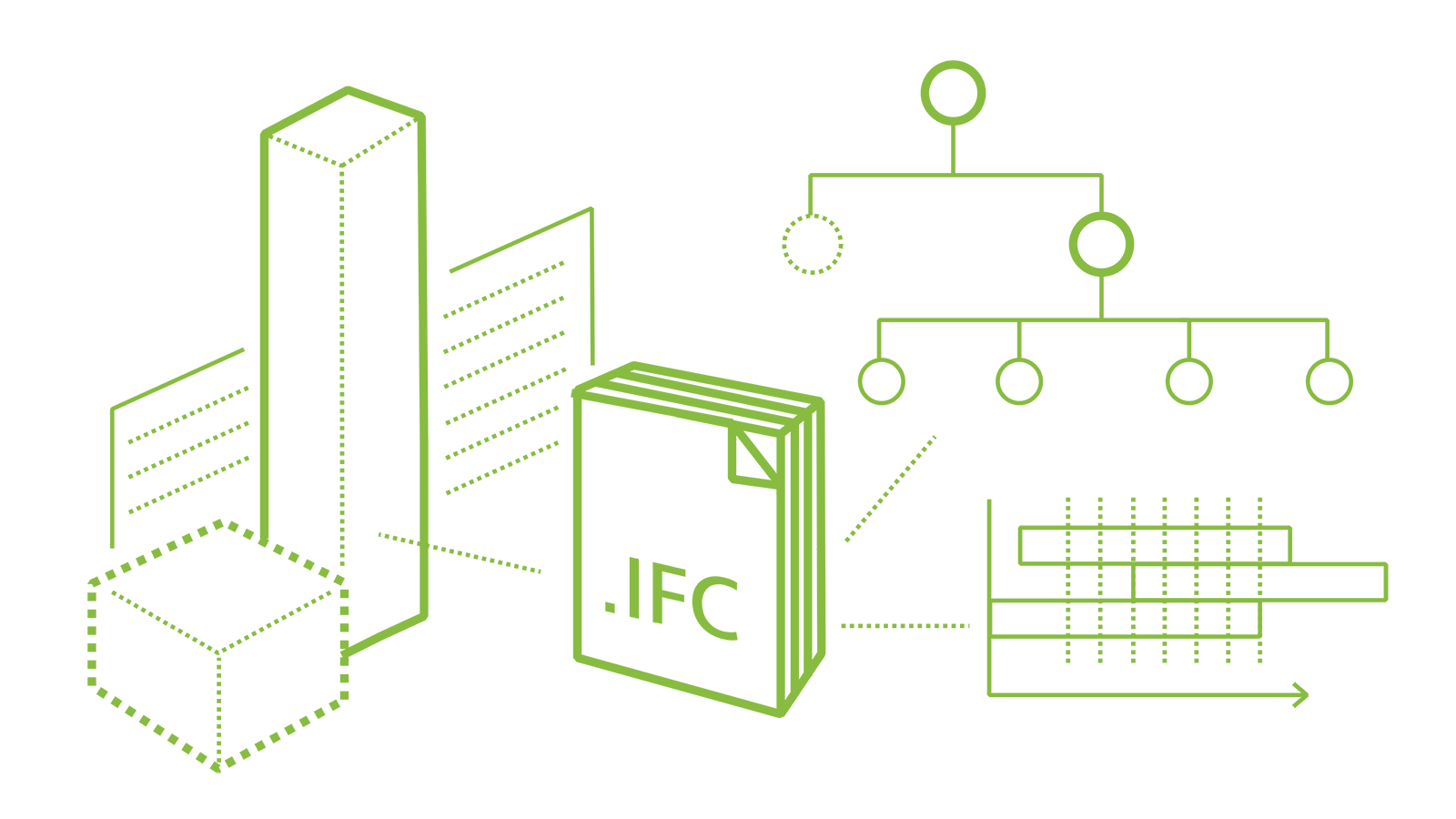

The integration between McNeel’s Rhino and Autodesk’s Revit has, in the past, proven to be a challenge for many designers looking to combine freeform geometry with BIM. These interoperability barriers are partly due to Revit’s API limitations and also, arguably, a lack of development of the Industry Foundation Classes (IFC) file format, which promised universal interoperability within the AEC industry.

Cultural barriers

The other barrier is one of culture and perception. Rhino is sometimes regarded as useful for conceptual modelling only, whereas Revit is preferred for documentation. Over and over, I see these two worlds failing to collaborate. The scenario generally looks something like this: young, recent graduates who are relatively computationally literate spend long hours at the office to win a competition. These people then jump onto other projects, and if the competition is won, a new team is put together to deliver the project. This team will most likely contain more experienced architects who are more attuned to developing and delivering the project. The Rhino model is thrown out and completely rebuilt from scratch within Revit. Sound familiar?

The problem with this approach is that, more often than not, it involves losing much of the intelligence built into the original design. And since Revit is unable to recreate complex geometry accurately, the design needs to be dumbed down to comply with Revit’s limitations. This methodology couldn’t be further from what BIM sets out to achieve. So what is the solution?

For some, the solution is simply to produce everything natively within Revit from the beginning. However, as shown in this case study, Revit sometimes simply isn’t capable of creating the desired forms. One, therefore, needs to look to other software that can, such as Rhino, but this too can have limitations.

Rhino to Revit limitations

Those who have attempted to use Rhino geometry within Revit will be all too familiar with the difficulty of achieving this successfully. The most basic method is to simply export a .sat file from Rhino and then import it into Revit. Best practice is typically to do this via a conceptual mass family. However, the result can be called ‘dumb geometry’ in that it cannot be edited once imported, which is far from ideal in a BIM environment.

The next progression in usability is to explode the import instance. Depending on the base geometry, Revit may make editing the mass accessible via grips (push/pull arrows), but this is not always the case. However, the best method to generate native Revit elements from Rhino is to use the wall-by-face, roof-by-face, curtain system and/or mass floors commands. These elements will be hosted to the Rhino geometry. In theory, these elements can be updated if the base geometry changes, but experience has shown that Revit is not always able to re-discover the host element, and new elements will need to be created. Therefore, it is prudent to test the Rhino to Revit workflow before investing too much time in embellishing the Revit elements.
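As a rough illustration of how one might decide which by-face command to try on each face of an imported mass, the sketch below sorts faces by the direction of their normal. It is plain Python rather than Revit or Rhino API code, and the function name `suggest_by_face_tool`, the 5-degree tolerance and the fallback to a curtain system for downward-facing faces are all assumptions made for this example, not rules taken from Revit.

```python
import math
from typing import Tuple

Vector = Tuple[float, float, float]


def suggest_by_face_tool(normal: Vector, tolerance_deg: float = 5.0) -> str:
    """Suggest a Revit by-face command for a mass face from its normal.

    Hypothetical helper: the thresholds and the fallback choice are
    illustrative assumptions, not behaviour defined by Revit.
    """
    x, y, z = normal
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0:
        raise ValueError("normal must be a non-zero vector")

    # Tilt is the angle between the normal and world Z (up):
    # 0 = face points straight up, 90 = vertical face, 180 = points down.
    cos_tilt = max(-1.0, min(1.0, z / length))
    tilt = math.degrees(math.acos(cos_tilt))

    if abs(tilt - 90.0) <= tolerance_deg:
        return "wall-by-face"      # near-vertical face
    if tilt < 90.0:
        return "roof-by-face"      # face points upwards
    return "curtain system"        # assumed fallback for downward faces


if __name__ == "__main__":
    for n in [(1.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 0.0, -1.0)]:
        print(n, "->", suggest_by_face_tool(n))
```

A check like this could be run on the Rhino side before export to flag faces that are unlikely to host the intended element, although the tolerance would need tuning for each project.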

The whole process of using Rhino geometry in Revit feels a bit like black magic. Often, the first attempt at importing the geometry will fail, and there will be no guidance from Revit as to why it failed. This can prove to be very frustrating, and many users will simply give up. However, if these best practices are followed when generating the mass in Rhino, integration with Revit should be seamless, or at the very least, less painful.

Interoperability via Dynamo

The best practices mentioned above all rely on more or less the same techniques once in Revit: wall-by-face, roof-by-face, curtain system or mass floors. In other words, a base mass needs to be provided to host the element. But what if you want to create other elements which aren’t roofs, floors or walls? This is where third-party plug-ins come into play. With the introduction of Dynamo, a whole new world of possibilities has emerged to facilitate interoperability. To assist Rhino users in becoming acquainted with Dynamo, I have produced a ‘Dynamo for Grasshopper Users’ primer. Since everything is a little different in Dynamo, the primer provides a list of ‘translations’ to help find a comparable node/component.

Conclusion

This post has presented the notion of a BIM ecosystem and encourages an open and agile workflow across the AEC profession. To illustrate this notion, the post explored how to extend Revit’s modelling capabilities by combining it with Rhino and Dynamo to create an integrated BIM environment. It is hoped that through greater awareness of each application’s strengths and weaknesses, BIM professionals can use the right tool for the job, rather than being constrained to a single piece of software.

References

1 Gu, N. et al. (2016). BIM Ecosystem: The co-evolution of products, process and people.

19 Comments

Konrad

I like the write up but I noticed at least a few mistakes and inaccuracies. I would point them out if you are willing to make corrections. Cheers!

paulwintour

Ha. Yes of course. No need to ask. Correct away

gester

very funny: revit limitations are being listed, but not a word is being spoken about the conceptual designing in a tool where it does work: nemetschek’s vectorworks.

it has an integrated massing model functionality (with sketchup’s push-pull as solid, not surface modelling), with the automatic generation of the floor plans, the parametric designing with the integrated marionette module (which is grasshopper pure), and the 3d modelling, additionally to the own parasolid functionality, via the subdivision module, resembling rhino and inventor.

add to it the integrated space programming ability (resembling this time trelligence affinity), and you have a perfect macro bim application.

add to it the perfect ifc integration, and you don’t have to mention revit at all.

paulwintour

I think you have missed the point of the post. I have no doubt that Vectorworks is a good program. I’m sure ArchiCAD is also good. I wouldn’t know because I’ve only used Revit. But the point is, regardless of which design authoring program you use, it can’t do everything. And why would you want it to do everything half baked, when there are so many specialist programs out there.

The dominance of proprietary tools and a lack of true interoperability is a hot topic at the moment. Last week Autodesk and Trimble announced an agreement to improve interoperability but there are questions whether this is just for show or if real change will come about. For too long we have been arguing about which is the better program, X or Y. It doesn’t really matter as long as it’s connected, hence the ecosystem. The conceptual massing example above was just one example highlighting why interoperability is important – at least for Revit. I’m sure all software, including Vectorworks, has its weaknesses.

You may want to have a read of some of these other posts too:

https://provingground.io/2016/06/21/the-wicked-problem-of-interoperability/

http://blog.areo.io/bim-interoperability/

gester

my concern is the real interoperability, i.e. using open formats, and not the heavyweight native file type.

there is one thing that is being missed in the post – it’s the notion of the workflow between the professionals working on a project. they exchange the geometry data as a reference of their contribution, then they work out the improvements based on the feedback brought in another open format. there is no round-tripping of geometry data.

autodesk, instead of focusing on the open formats, still try to use the native ones, as the recent deal with trimble indicates. there is no secret that the relationship autodesk-ifc is not an ideal one, and it doesn’t change. they simply still try to bridge the path to any part of the design-construction (= ipd) processes with further native cooperations. if we want to follow the mcphee’s ‘different bims for each process step’ then we have to mention solutions that work in an open bim type for certain stages, if we want to study cases.

purchasing a constellation of tools for initial macro bim doesn’t make sense, when there is one solution that works fine, and you don’t mention it. surely vectorworks has its flaws, too, but just for macro bim there is no better strategy. the question is, if the resulting ifc made of solid operations may be imported to revit at all.

paulwintour

I agree that Autodesk hasn’t been doing enough for IFC integration. But what exactly are you arguing – that Vectorworks is the best and we should all use it? So Vectorworks can process large point clouds, offer cloud collaboration platforms, direct to fabrication capabilities, VR capabilities and clash detection? I can publish a book using just Photoshop but that doesn’t mean that is the best approach.

gester

ok,

1. you’ve addressed macro bim (conceptual design and its evaluation). autodesk don’t fit here with their revit and assorted other tools (in spite of your suggestions), and vw is the best solution for this. just this, and nothing more.

2. surely vw can handle large point clouds, and project sharing, but i haven’t mentioned this part of the process at all. my point is that, while speaking of case studies, and advocating open solutions, one should fairly mention things that work the best.

3. revit, and all other bim authoring tools are incapable of the full design to fabrication process, so there is no point in mentioning this, too.

paulwintour

This is a Revit-based post. I state at the beginning that “this post explores how to extend Revit’s modelling capabilities by combining it with McNeel’s Rhinoceros and Dynamo.” Claiming that Vectorworks is the best solution is your opinion only. At no point do I claim that Autodesk or Revit is the best. In fact, I’ve already commented that Autodesk needs to massively improve and I’ve previously posted about this issue (https://parametricmonkey.com/2015/02/01/dynamo-revits-slow-development/).

It’s a futile argument and only serves to further fragment the BIM community. I am simply offering solutions to extend Revit’s capabilities and improve the situation. If you want to push Vectorworks then maybe this isn’t the forum for you. And by the way, Rhino and Grasshopper both work with digital fabrication, integrating with Kuka & HAL robots, so if I can connect Revit to Rhino, then I can fabricate my BIM model.

gester

you’re right, delving deeper into autodesk is not my thing, i don’t use any of those products, so it’s really not my forum. i’m an architect, and no rhino fabrication is useful for me, i need real cam products, and working with open formats.

anyway, thanks for your time and attention, keep up your work and good bye.

Andrei

the most pointless argument ive seen in a while. Haha. interesting post by the way

gester

and, btw, is revit cloud collaborating with other vendor products as well? or only with autodesk ones?

Josh Moore

Hi came across your blog and was wondering if I could have permission to use your BIM Ecosystem image in a dynamo presentation I’m doing at AU this year. Of course I will put a link to your blog post as credit. Let me know!

paulwintour

Hi Josh. Yep sure, go for it. Thanks for asking. I’ll be at AU. Maybe we can catch up for a beer. If I can get in on the waitlist I’ll come along to your class.

Jens Majdal Kaarsholm

Great post Paul, I totally agree with you, we shouldn’t limit ourselves to a single software, but rather use the best suitable solutions for the job. And then optimise the workflows between each of them.

You mention the .sat workflow from Rhino to Revit, and the usual struggle getting Rhino perfectly into Revit. On the current project I’m working on, we use a lot of imported geometry from Rhino. And after many different approaches, both with .sat, dynamo etc. we found that AutoCAD 2016 is actually doing the best job as a translation tool. Since you can open native Rhino files directly in AutoCAD 2016, clean them up, relayer things a bit if needed, and export them out as .dwg’s that in most cases will go smoothly into Revit.

Also if you set all your Rhino layers to be “by layer” in AutoCAD, you will be able to see and control the materials in Revit easily from the manage object styles tab. Very useful when using live rendering tools like Enscape etc.

Thought it was worth mentioning.

I know the post is half a year old, but I just came across it today. And as I said, great read. Thanks.

paulwintour

Thanks for the tips Jens. I’ll check it out. Is there any benefit in going through AutoCAD at all, as opposed to just exporting a .dwg directly from Rhino?

Jens Majdal Kaarsholm

Hi Paul,

Yes, there is usually a big difference between a .dwg that’s been exported directly from Rhino and a .dwg produced by AutoCAD, by opening the native .3dm directly in AutoCAD 2016 and then saving it out as a .dwg from there. As I mentioned in my earlier post, remember to clean, set things to be “by layer” and purge before you bring the .dwg into Revit.

Geometry that usually breaks when you import the direct Rhino .dwg, will in most cases come in perfectly using AutoCAD as the middle man.

But now that 2017 opens Rhino directly this workflow might not be as useful anymore, but I haven’t had much time to test this yet, as my current project is still running 2016.

Trang Nguyen

Hi Paul,

Great article! Just want to ask if I could translate your article into Vietnamese and post it on my blog.

Thank you in advance.

Best,

Trang

paulwintour

Shouldn’t be a problem Trang. What is your blog? Just make sure you credit this article.

Trang Nguyen

Thanks Paul! Sure, I will add a link to your original post as a credit. The blog I mentioned is actually my LinkedIn: https://www.linkedin.com/in/trang-n-nguyen.

Thanks again and keep up the good work!