True digital transformation doesn’t mean digitalising old ways of working. It means harnessing technology to do better things. When working with organisations, a significant component of what we do at Parametric Monkey is the process of extracting domain knowledge from clients so that it can be encoded into an algorithm. Known as knowledge elicitation, the aim is to capture knowledge to achieve automation at scale. This article unpacks what we mean by knowledge and why it exists the way it does. We then explore how knowledge elicitation can be achieved in the Architecture, Engineering and Construction (AEC) industry. Finally, the article discusses the technology-based biases holding back organisations from true digital transformation.

I sometimes find, and I am sure you know the feeling, that I simply have too many thoughts and memories crammed into my mind.

Albus Dumbledore in Harry Potter1

Understand AEC’s problems using the 5 whys

Much like referred pain in the human body, the AEC industry’s underlying issues are hidden behind more obvious symptoms. One of the best ways to see beyond these symptoms is the ‘5 whys’ technique. Championed by Eric Ries in his book, ‘The Lean Startup’, the process involves asking and answering ‘why’ five times.2 Consider the following thought experiment:

Why are AEC projects so time-consuming? Because they are complex, and we have to wait for input from other stakeholders.

Why do we need input from other stakeholders? Because they are experts in their field, and we need their knowledge and guidance.

Why do we need their knowledge and guidance? Because the knowledge we need resides in their heads, which only they have access to.

Why does knowledge reside only in their heads? Because knowledge hasn’t been captured in a scalable format.

Why hasn’t knowledge been captured in a scalable format? Because many professionals believe their knowledge is tacit and not easily articulated.

As this example illustrates, how professionals capture and disseminate knowledge is one of the industry’s core issues. So what exactly do we mean by knowledge? What are the limitations of how it is currently disseminated in the AEC industry? And how can we enable its scalability?

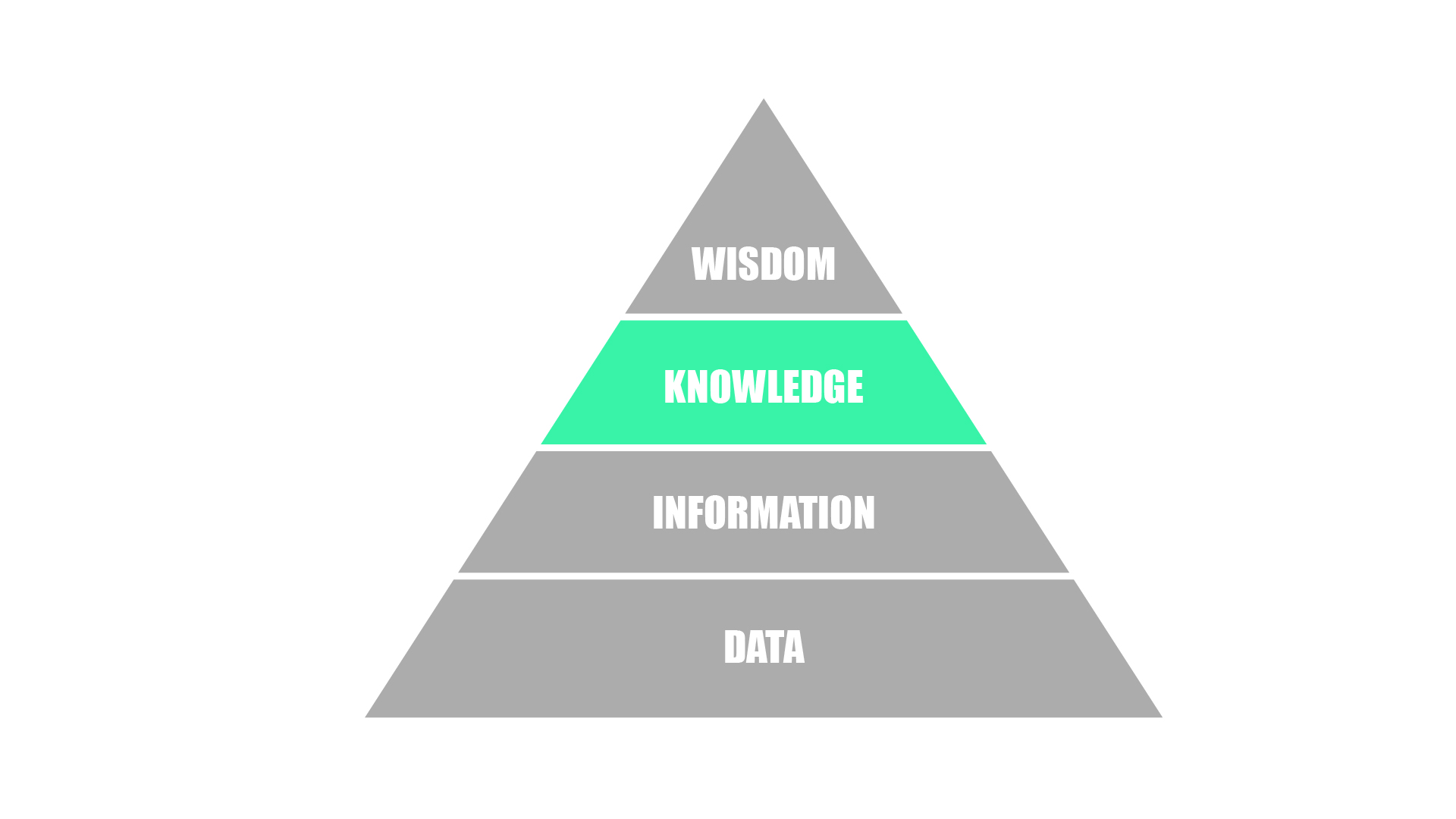

Data, information, knowledge, wisdom

Many readers may be familiar with the ‘data-information-knowledge-wisdom’ (DIKW) pyramid. Popularised in 1988 by Russell Ackoff, a leading organisational theorist, the model suggests that while many items may be classified as data, information is differentiated from data in that it is useful – often in the form of organised or structured data. Knowledge, on the other hand, is actionable information. Finally, wisdom refers to evaluated understanding or an appreciation of why.3

While data and information are widely captured and disseminated digitally, knowledge and wisdom have mostly remained distinctly human processes, stored in individual brains and disseminated via books and documents. But this limitation stems not from the nature of knowledge and wisdom themselves but rather from the medium in which they have historically been captured and disseminated.

Paper-based knowledge

Around 1450, Johannes Gutenberg invented the printing press, enabling knowledge to be captured and recorded in books. The invention was a marked improvement on orally transmitted information and manually scribed documents, as information could now be disseminated far more easily. But Gutenberg’s invention did something else: it fundamentally shaped our perception of knowledge and how it should be captured. As David Weinberger describes, “…books are a disconnected, nonconversational, one-way medium… Long-form thinking looks the way it does because books shaped it that way. And because books have been knowledge’s medium, we have thought that that’s how knowledge should be shaped”.4

Digital-based knowledge

Fast forward almost six centuries, and the human race has gone through three industrial revolutions – steam, electricity, digital – and is currently in the fourth – Artificial Intelligence/Internet of Things. Technology has been at the centre of each revolution. Yet despite all of these technological advancements, much of how professional knowledge is currently captured remains paper-based.

| Revolution | Period | Characteristics |
| --- | --- | --- |
| 1st industrial revolution | 18th and 19th centuries | Used water and steam power to mechanise production. |
| 2nd industrial revolution | 1870 – 1914 | Used electric power to create mass production. |
| 3rd industrial revolution | 1980s – | Used electronics and information technology to automate production. |
| 4th industrial revolution | 2010s – | Characterised by a fusion of technologies that is blurring the lines between the physical, digital, and biological spheres. |

Paper-based in this context doesn’t necessarily refer to paper as the medium, that is, a hard copy. Rather, it refers to who or what ‘reads’ the knowledge, and how. Documents may be digital, or soft copy, say in the form of an image or PDF, but not all digital documents are created equal. True digital transformation doesn’t mean digitalising old ways of working. It means harnessing technology to do better things. In the case of knowledge, ‘digital’ really means machine-readable.

Planning legislation

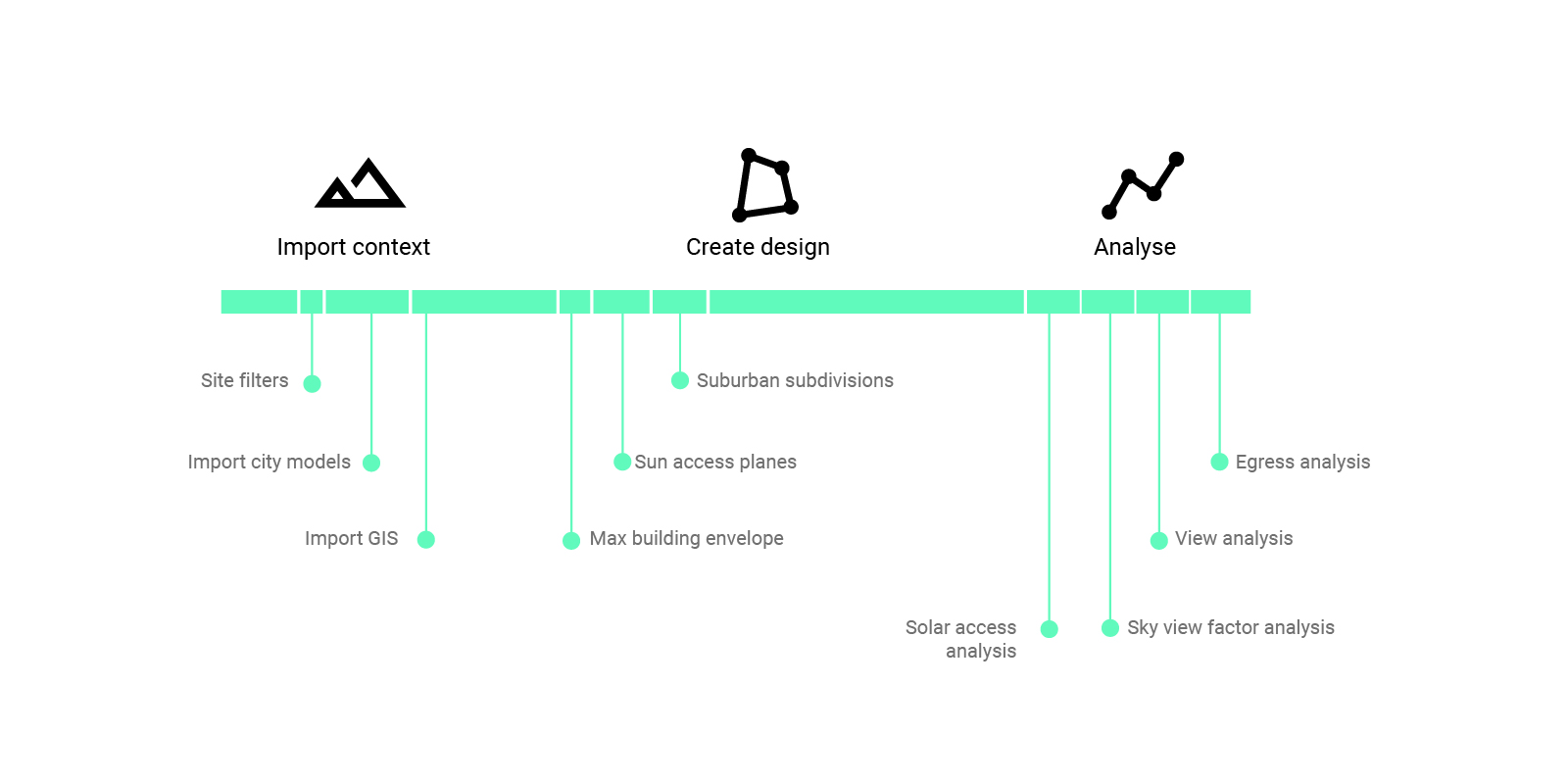

Consider planning legislation, for example. Despite digital twin initiatives by state and federal governments, most planning requirements are yet to be captured in a machine-readable format. Instead, they exist as text on a website and are often contradictory or open to interpretation.

GIS files supplied by governments capture some of the requirements, but the textual documents take precedence. And since textual documents are open to interpretation, even current text recognition software would fail to understand the requirements. A far better solution is the codification of the legislation, as we’ve demonstrated with MetricMonkey. Here, the algorithm creates the digital model, which can form the ‘single source of truth’, avoiding ambiguities and duplication of effort.
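To illustrate what ‘machine-readable’ could mean here, the following is a minimal sketch, not a description of how MetricMonkey or any government dataset actually works. The `PlanningControl` and `Proposal` records, their field names and all numeric values are hypothetical. The point is only that once a control is stored as data, the permissible envelope can be computed rather than interpreted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanningControl:
    """Hypothetical machine-readable planning controls for one site."""
    max_height_m: float        # maximum building height in metres
    floor_space_ratio: float   # permitted gross floor area / site area


@dataclass(frozen=True)
class Proposal:
    """Hypothetical summary of a proposed building."""
    site_area_m2: float
    height_m: float
    gross_floor_area_m2: float


def max_gross_floor_area(control: PlanningControl, site_area_m2: float) -> float:
    """Permissible gross floor area implied by the floor space ratio."""
    return control.floor_space_ratio * site_area_m2


def check_proposal(control: PlanningControl, proposal: Proposal) -> list[str]:
    """Return a list of breaches; an empty list means none were found."""
    breaches = []
    if proposal.height_m > control.max_height_m:
        breaches.append(
            f"height {proposal.height_m} m exceeds {control.max_height_m} m"
        )
    allowed = max_gross_floor_area(control, proposal.site_area_m2)
    if proposal.gross_floor_area_m2 > allowed:
        breaches.append(
            f"floor area {proposal.gross_floor_area_m2} m2 exceeds {allowed} m2"
        )
    return breaches


# Illustrative values only, not taken from any real planning instrument.
control = PlanningControl(max_height_m=12.0, floor_space_ratio=1.5)
proposal = Proposal(site_area_m2=600.0, height_m=13.5, gross_floor_area_m2=850.0)
print(check_proposal(control, proposal))
```

Because the controls live in one structured record, every tool that reads them gets the same answer, which is the sense in which such a model could act as a single source of truth.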

Building regulations

Or consider building regulations. To validate a design’s compliance, one needs to read volumes and volumes of building code, even though many requirements are prescriptive, known as ‘Deemed-To-Satisfy’ solutions. Placing the regulations onto a website may make them more accessible and ‘digital’, but it doesn’t remove the need for them to be read by a human. Again, a better solution would be to have an official government portal to validate designs based on in-built rules. We’ve already demonstrated how code can verify individual requirements, such as stair compliance, solar access, and minimum area requirements. So how does one capture knowledge for it to be machine-readable?
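At its simplest, a prescriptive requirement becomes a rule that code can evaluate. The sketch below checks a stair’s riser and going against a set of limits. The function names are hypothetical and the limits are illustrative placeholders, so the applicable building code should always be consulted for the real values.

```python
# Illustrative placeholder limits in millimetres. Check the applicable
# building code for the real values before relying on any of these.
RISER_RANGE_MM = (115, 190)
GOING_RANGE_MM = (240, 355)
SLOPE_RANGE_MM = (550, 700)   # limits on the quantity 2 x riser + going


def within(value, bounds):
    """True if value lies inside the inclusive (low, high) bounds."""
    low, high = bounds
    return low <= value <= high


def check_stair(riser_mm, going_mm):
    """Return the names of the rules that fail; empty means all pass."""
    failures = []
    if not within(riser_mm, RISER_RANGE_MM):
        failures.append("riser")
    if not within(going_mm, GOING_RANGE_MM):
        failures.append("going")
    if not within(2 * riser_mm + going_mm, SLOPE_RANGE_MM):
        failures.append("slope")
    return failures


print(check_stair(175, 250))   # all three rules satisfied
print(check_stair(200, 250))   # riser too high
```

Returning the failed rule names, rather than a single pass or fail, keeps the result explainable to the designer, which matters when the check is meant to replace reading the code by hand.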

Knowledge elicitation

In ‘Harry Potter’, Hogwarts’ headmaster Albus Dumbledore uses a device called a ‘Pensieve’ to store thoughts. As he explains, “One simply siphons the excess thoughts from one’s mind, pours them into the basin, and examines them at one’s leisure. It becomes easier to spot patterns and links, you understand, when they are in this form.”5 But for the rest of us Muggles who lack any sort of magical ability, we must rely on what is known as ‘knowledge elicitation’.

Knowledge elicitation is the process whereby a knowledge engineer works with a domain expert, such as an architect or engineer, to extract knowledge before codifying it into a machine-readable format. Needless to say, this is not a trivial process as knowledge can be both explicit and tacit.

Explicit versus tacit knowledge

‘Explicit’ knowledge is knowledge that is easy to articulate. Planning legislation and building regulations, as presented above, are examples of explicit knowledge which can be easily codified. However, many professionals consider their knowledge not to be easily articulated and instead rely on intuitions. This is known as ‘tacit’ knowledge. But through introspection and with the support of knowledge elicitation specialists, they often find that they can model their expertise, typically in the form of an algorithm.

For many professionals, the idea that their job can be automated seems preposterous. Architecture or engineering is far too complex to be automated. And it’s true. If professions are considered some amorphous, all-encompassing black-box process, then yes, it will take some time for technology to replace professionals. However, if we are honest with ourselves and take a deep look at what professionals do, it’s clear that much of what professionals do can be broken down into discrete tasks which can be automated.

Since algorithms understand only what they are explicitly told, care should be taken in the codification process to ensure accuracy. In particular, it is crucial to be aware of cognitive biases and the limitations of experience as the foundation of knowledge acquisition.

The myth of experience

If I have seen further it is by standing on the shoulders of giants.

Isaac Newton

Professionals often profess their knowledge by stating how many years of experience they have: “I am an experienced architect with over ten years’ experience.” But how reliable is experience when it comes to knowledge acquisition? Much research has been done in this field, and the results may surprise you.

Instead of giving us the right answer, experience often reinforces the wrong ones. This is because professional learning is often undertaken within irregular environments known as ‘wicked environments’. As such, we can make the same mistakes repeatedly without even realising that we have a problem.6 Take, for example, overtime in architectural practice. Study after study has shown how overtime causes reduced cognitive abilities, as well as increased errors and burnout. Yet despite the overwhelming scientific evidence of adverse outcomes for both staff and the organisation, staff are still often promoted based on the overtime they perform. Of course, this is just one example. The day-to-day workings of AEC professionals are littered with such cases. So when can experience be relied on?

The Nobel Prize-winning psychologist and economist Daniel Kahneman suggested that two conditions must be satisfied for intuition to be valid. Firstly, the environment must be sufficiently regular to be predictable. And secondly, there must be an opportunity to learn these regularities through prolonged practice.7 Valid expert intuition is therefore dependent on the quality and speed of feedback and sufficient opportunity to practise. Given the numerous variables and complexity of projects, much of what AEC professionals do doesn’t satisfy these requirements. Put bluntly, the experience and intuitions of AEC professionals are unlikely to be valid.

Rethink and unlearn

Progress is impossible without change; and those who cannot change their minds cannot change anything.

George Bernard Shaw

As well as providing ‘the illusion of validity’, experience can also constrain us to prefer certain choices, processes, or actions even when they become obsolete or irrelevant.8 In fact, Adam Grant has dedicated an entire book to the importance of rethinking and unlearning. Grant claims, “Intelligence is traditionally viewed as the ability to think and learn. Yet in a turbulent world, there’s another set of cognitive skills that might matter more: the ability to rethink and unlearn.”9 He continues, “We laugh at people who still use Windows 95, yet we still cling to opinions that we formed in 1995. We listen to views that make us feel good, instead of ideas that make us think hard.”10 Since knowledge can evolve, so must we. If not, we may end up solving the wrong problems, using inadequate methods, and failing to achieve our objectives.

The AI fallacy & technological myopia

Some may argue that even at the jobs-to-be-done level, AI will never be able to ‘think’ like a professional and, therefore, will never be able to automate the task. This is known as the ‘AI fallacy’ and is the mistaken belief that the only way to develop systems that perform tasks at the level of experts is to replicate the thinking process of human specialists. This is a bit like saying that the only way to fly a plane is to flap wings like a bird.11 There are other ways that we can be ‘smart’ without trying to replicate how an expert thinks. Brute-force computation, for example, may not be elegant or sophisticated, but if it can achieve the same output, does the process even matter?

Another tendency professionals may fall victim to is underestimating the potential of tomorrow’s applications by evaluating them in terms of today’s enabling technologies. This cognitive bias is known as ‘technological myopia’, and it can hinder how and where organisations invest their resources. As Moore’s Law predicted, computational power has grown exponentially, doubling roughly every two years. To put that in context, from 1950 to 2000 computational power increased roughly by a factor of 10 billion. So just because we have insufficient computational power today doesn’t mean that it won’t exist tomorrow.12
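The arithmetic behind these two figures can be checked directly. The short sketch below uses only Python’s standard library to compare the growth implied by a strict two-year doubling period with the factor of 10 billion quoted above. The function names are illustrative.

```python
import math


def growth_factor(years, doubling_period_years):
    """Total growth after `years` if capacity doubles every period."""
    return 2 ** (years / doubling_period_years)


def implied_doubling_period(years, factor):
    """Doubling period (in years) needed to reach `factor` in `years`."""
    return years / math.log2(factor)


years = 2000 - 1950

# Growth over 50 years with a strict two-year doubling period: 2**25.
print(f"{growth_factor(years, 2):,.0f}")

# Doubling period implied by a factor of 10 billion over the same span.
print(f"{implied_doubling_period(years, 10e9):.2f} years")
```

A strict two-year doubling gives a factor of about 34 million over 50 years, whereas a factor of 10 billion corresponds to doubling roughly every year and a half. Either way the growth is exponential, which is all the argument against technological myopia relies on.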

Technological unemployment

Even if professionals accept that their knowledge can be codified, many may still have an aversion to technology. The most obvious and frequently stated reason is the fear that machines will take their jobs. This fear is known as ‘technological unemployment’, a term popularised by the British economist John Maynard Keynes in 1930. But the underlying sentiment dates further back.

In the 19th century, the Luddites smashed a set of framing machines to protect against manufacturers who used machines in what they called “a fraudulent and deceitful manner” to get around standard labour practices. The group is believed to have taken their name from the weaver, Ned Ludd. Today, we still call our technologically disinclined contemporaries ‘Luddites’.13

The general perception of technological unemployment is that of low-skilled workers being replaced by machines. But Ned Ludd was a skilled worker of his age, not an unskilled one.14 These new machines were ‘de-skilling’, making it easier for less-skilled people to produce high-quality wares that would have required skilled workers in the past. But as Susskind reassures, “even at the century’s end, tasks are likely to remain that are either hard to automate, unprofitable to automate, or possible and profitable to automate but which we still prefer people to do.”15

Machine bias

Further resistance to technology comes in the form of machine bias. People consistently prefer human judgement – their own or someone else’s – to algorithms, even when the human judgement is less accurate. Some regard the idea of algorithmic decision making as dehumanising and as an abdication of their responsibility.16 As Kahneman describes:

Resistance to algorithms, or algorithm aversion, does not always manifest itself in a blanket refusal to adopt new decision support tools. More often, people are willing to give an algorithm a chance but stop trusting it as soon as they see that it makes mistakes… As humans, we are keenly aware that we make mistakes, but that is a privilege we are not prepared to share. We expect machines to be perfect. If this expectation is violated, we discard them. Because of this intuitive expectation, however, people are likely to distrust algorithms and keep using their judgement, even when this choice produces demonstrably inferior results.17

To see how the cause of the mistake matters, consider a self-driving car. Even though autonomous vehicles may be far safer than conventional cars, a single crash will come under far greater scrutiny, undermining confidence in their superior safety. The question then is, even if we know algorithms make mistakes, but human judgement repeatedly makes even more mistakes, whom should we trust?

Along the same lines, some may resist technology simply because of the craft involved. This tendency can be seen in price premiums for barista-made coffee or hand-made clothing. Even though tasks can be automated at a low cost, it doesn’t necessarily mean that people will want the automated result. For the AEC industry, it is clear that many tasks may remain craft-based. But that shouldn’t preclude the automation of those tasks which can and should be automated.

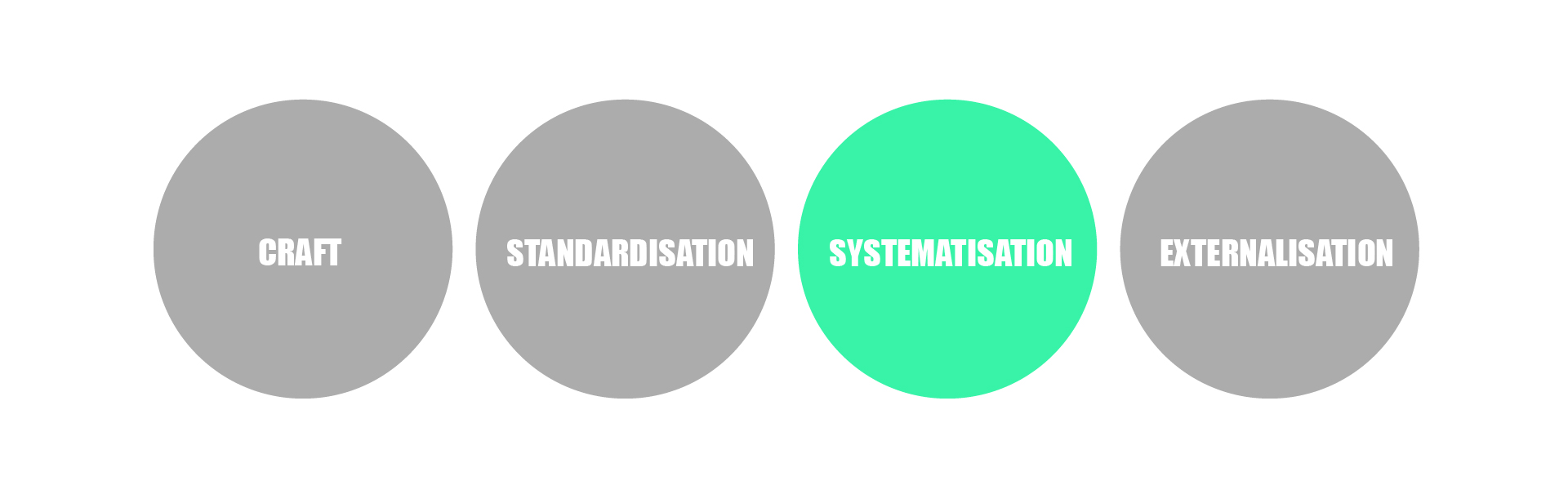

The evolution of professional work

In the future, machines will not do everything, but they will do more. New technologies will continue to take on tasks we thought only human beings would ever do. As professional work evolves, we’ll witness a fundamental transformation in our understanding of what a professional is. According to the Susskinds, market forces, technological advances and human ingenuity will drive professional work from craft to standardisation to systematisation and, finally, to externalisation.18

Craft

In this conceptual model, craft is the quintessential approach to professional work, in which professionals tailor and customise their work for each project. This romantic notion is the one sold to university students. But in practice – save for very few projects and architects – craft is rare. This is not to say that craft isn’t important, simply that it makes up only a very small portion of the output.

Standardisation

The move towards standardisation is already well underway, with practical expertise routinised for later reuse. This shift has been driven not by cost-cutting but by the desire to avoid errors and ensure consistency across work. It materialises through checklists, procedure manuals, and standards and is very much the BIM consultant’s narrative.

Systematisation

The third step is systematisation, where systems are developed to assist experts or replace them altogether. Where standardisation involves reducing tasks to reusable paper-based routines, systematisation involves codifying knowledge in a machine-readable format. This is the approach Parametric Monkey takes with our clients, and we’re witnessing more and more organisations embracing the process for improved accuracy and consistency.

Externalisation

The final step is externalisation. While systematisation is based on tools and systems for use within the organisation or profession, the externalisation phase will see these tools and systems made available directly to end-users. This democratisation of knowledge can already be seen in various industries outside of the AEC industry. QuickBooks and Xero, for example, are replacing accountants, and Massive Open Online Courses (MOOCs) are disrupting higher education. In the AEC industry, generative design is becoming more and more pervasive. But since construction is a costly and complicated process, it is unclear to what extent such technology will be externalised to laypeople outside of the profession or industry.

Conclusion

Professional knowledge has remained a distinctly human process, stored in individual brains and disseminated via books and documents. But this limitation stems not from the nature of knowledge itself but from the paper-based medium in which it has been captured. True digital transformation doesn’t mean digitalising old ways of working. It means harnessing technology to do better things. And one of those things is capturing knowledge for the digital age. Unlike Dumbledore, we don’t have the luxury of a Pensieve. But with the help of specialists, organisations can once and for all encode their knowledge into algorithms to finally achieve automation at scale.

References

1 Rowling, J. (2004). Harry Potter and the goblet of fire. Bloomsbury Publishing, London, p.648.

2 Ries, E. (2011). The lean startup: How constant innovation creates radically successful businesses. Penguin, London, pp.229-244.

3 Ackoff, R. (1988). From data to wisdom. In Journal of applied systems analysis, vol. 16, pp.3-9.

4 Weinberger, D. (2014). Too big to know: Rethinking knowledge now that the facts aren’t the facts, experts are everywhere, and the smartest person in the room is the room. Basic Books, New York, p.95.

5 Rowling, J. (2004). Harry Potter and the goblet of fire. Bloomsbury Publishing, London, p.649.

6 Soyer, E. & Hogarth, R. (2020). The myth of experience: Why we learn the wrong lessons, and ways to correct them. Hachette Book Group, New York, p.5.

7 Kahneman, D. (2011). Thinking, fast and slow. Penguin Books, Great Britain, p.240.

8 Soyer, E. & Hogarth, R. (2020). The myth of experience: Why we learn the wrong lessons, and ways to correct them. Hachette Book Group, New York, p.14.

9 Grant, A. (2021). Think again: The power of knowing what you don’t know. WH Allen, London, p.2.

10 Grant, A. (2021). Think again: The power of knowing what you don’t know. WH Allen, London, p.4.

11 Susskind, D. (2020). A world without work: Technology, automation, and how we should respond. Penguin Books, London, p.63.

12 Susskind, D. (2020). A world without work: Technology, automation, and how we should respond. Penguin Books, London, p.30.

13 Susskind, D. (2020). A world without work: Technology, automation, and how we should respond. Penguin Books, London, p.16.

14 Susskind, D. (2020). A world without work: Technology, automation, and how we should respond. Penguin Books, London, p.35.

15 Susskind, D. (2020). A world without work: Technology, automation, and how we should respond. Penguin Books, London, p.4.

16 Kahneman, D. et al. (2021). Noise: A flaw in human judgment. William Collins, London, p.134.

17 Kahneman, D. et al. (2021). Noise: A flaw in human judgment. William Collins, London, p.135.

18 Susskind, R. & Susskind, D. (2017). The future of the professions: How technology will transform the work of human experts. Oxford University Press, Oxford, p.196.

2 Comments

Matt Bishop

JK Rowling and D Kahneman referenced in the same article!

Nice article Paul, I think the Pensive analogy is very apt, although AI can work to not only capture your own thoughts, but potentially all your colleagues thoughts as well! I do very much like the idea of the engineering AI Algorithm, trained on a database of past and present structural designs, that is able to say “hmmmm, 80% of your colleagues would have designed a beam that was twice the size of that one…”

Paul Wintour

Thanks Matt. From the Harry Potter Wiki: “Owing to the highly personal nature of extracted memories, and the potential for abuse, most Pensieves were entombed with their owners along with the memories they contain. Some witches and wizards would pass on their Pensive/memories to another person, as is the case with the Hogwarts Pensieve.” So absolutely, not just capturing one’s own thoughts but everyone’s in the organisation. And structural design is a prime candidate for this.